arXiv: 2604.18364 · PDF

Authors: Ravidu Suien Rammuni Silva, Ahmad Lotfi, Isibor Kennedy Ihianle, Golnaz Shahtahmassebi, Jordan J. Bird

Primary category: cs.AI · all: cs.AI, cs.GR, cs.MA

Matched keywords: large language model, llm, agent, agentic, reasoning, inference, fine-tun

TL;DR

The paper introduces ManimTrainer (SFT + GRPO with fused code/visual rewards) and ManimAgent (Renderer-in-the-loop inference with API-doc augmentation) for text-to-code-to-video Manim animation. Qwen 3 Coder 30B with GRPO and RITL-DOC reaches a 94% Render Success Rate and 85.7% Visual Similarity, 3 percentage points above the GPT-4.1 baseline on Visual Similarity.

Key Ideas

- First unified study of training × inference strategies for Manim animation generation.

- Unified reward fusing code-level and visual-level signals for RL.

- Renderer-in-the-loop (RITL) and API-doc-augmented RITL (RITL-DOC) for agentic self-correction.

- SFT generally improves code quality; GRPO improves visual output and makes models more responsive to extrinsic feedback during self-correction.
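A minimal sketch of how a fused reward could feed GRPO's group-relative advantage is given here for orientation. The equal weights, the rule that a failed render earns no visual credit, and the function names `fused_reward` and `group_relative_advantages` are assumptions for illustration; the paper's actual reward definition is not reproduced in this summary.

```python
from statistics import mean, pstdev


def fused_reward(code_score: float, visual_score: float, rendered: bool,
                 w_code: float = 0.5, w_visual: float = 0.5) -> float:
    """Hypothetical fusion: weighted sum of a code score and a visual score,
    both assumed to lie in [0, 1]. Weights are illustrative, not the paper's."""
    # Assumption: a sample that fails to render receives no visual credit.
    visual = visual_score if rendered else 0.0
    return w_code * code_score + w_visual * visual


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """GRPO-style advantage: standardise each reward within its sampled group,
    so no separate value network is needed."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; eps guards against a zero spread
    return [(r - mu) / (sigma + eps) for r in rewards]


# Usage: score a group of completions sampled for one prompt.
group = [
    fused_reward(0.9, 0.8, rendered=True),
    fused_reward(0.7, 0.0, rendered=False),
    fused_reward(0.5, 0.6, rendered=True),
]
advantages = group_relative_advantages(group)
```

Completions with above-average fused reward within the group get a positive advantage and are reinforced; those below average are discouraged.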

Approach

ManimTrainer applies SFT followed by GRPO, using a composite reward that combines static code assessment with visual assessment of the rendered video. ManimAgent wraps inference in a feedback loop with the Manim renderer (RITL), optionally augmented with retrieved API documentation (RITL-DOC), so the model can iteratively correct its generated code before the final render.
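The renderer-in-the-loop idea can be sketched roughly as follows. This is an illustration under stated assumptions, not the paper's implementation: the `generate`, `render`, and `lookup_docs` callables, the retry budget `max_rounds`, and the way documentation is appended to the feedback are all hypothetical.

```python
from typing import Callable, Optional, Tuple

# Hypothetical interfaces (not taken from the paper):
#   generate(prompt, feedback) -> candidate Manim source code
#   render(code)               -> (success flag, renderer error log)
#   lookup_docs(error_log)     -> API documentation text relevant to the error
Generate = Callable[[str, Optional[str]], str]
Render = Callable[[str], Tuple[bool, str]]
LookupDocs = Callable[[str], str]


def ritl(prompt: str, generate: Generate, render: Render,
         lookup_docs: Optional[LookupDocs] = None,
         max_rounds: int = 3) -> Tuple[str, bool, int]:
    """Generate code, try to render it, and feed errors back until it renders
    or the retry budget runs out. Passing lookup_docs gives a RITL-DOC-like
    variant in which retrieved documentation is appended to the feedback."""
    feedback: Optional[str] = None
    code = ""
    for attempt in range(1, max_rounds + 1):
        code = generate(prompt, feedback)
        ok, log = render(code)
        if ok:
            return code, True, attempt
        feedback = log
        if lookup_docs is not None:
            feedback = log + "\n\nRelevant API docs:\n" + lookup_docs(log)
    return code, False, max_rounds
```

In practice `render` would invoke the Manim renderer on the candidate scene and capture its error output; it is left abstract here so that the loop itself stays independent of any particular rendering setup.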

Experiments

Benchmarks 17 open-source sub-30B LLMs across 9 combinations of training (base, SFT, SFT+GRPO) and inference (direct, RITL, RITL-DOC) strategies on ManimBench. Metrics: Render Success Rate (RSR) and Visual Similarity (VS) to reference videos. GPT-4.1 serves as a baseline.
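As a rough illustration of the evaluation grid, the nine configurations are the Cartesian product of the three training and three inference settings, and RSR is read here as the fraction of benchmark prompts whose final code renders. The helper name `render_success_rate` and the example counts are assumptions; the Visual Similarity metric is not sketched because its definition is not given in this summary.

```python
from itertools import product

TRAINING = ["base", "SFT", "SFT+GRPO"]
INFERENCE = ["direct", "RITL", "RITL-DOC"]

# 3 training settings x 3 inference settings = 9 configurations per model.
CONFIGS = list(product(TRAINING, INFERENCE))


def render_success_rate(render_flags: list[bool]) -> float:
    """Fraction of prompts whose generated code rendered successfully."""
    if not render_flags:
        raise ValueError("need at least one result")
    return sum(render_flags) / len(render_flags)


# Usage with made-up counts: 47 successful renders out of 50 prompts.
example_flags = [True] * 47 + [False] * 3
example_rsr = render_success_rate(example_flags)
```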

Results

The best configuration (Qwen 3 Coder 30B with GRPO and RITL-DOC) achieves 94% RSR and 85.7% VS, exceeding GPT-4.1 by 3 pp on VS. The correlation between code and visual metrics strengthens with SFT and GRPO but weakens with inference-time enhancements, suggesting that training and agentic inference play complementary roles.

Why It Matters

Shows that small open-source code models, when combined with visual-reward RL and renderer-grounded agentic loops, can surpass a frontier closed model (GPT-4.1) on visual similarity in a domain-specific code-to-video task. The recipe may transfer to other programmatic generation domains (CAD, plots, simulations), although the paper evaluates only Manim.

Connections to Prior Work

Builds on GRPO (introduced in DeepSeekMath and used in DeepSeek-R1), code-SFT pipelines, execution-feedback agents (Reflexion, Self-Debug), retrieval-augmented code generation, and prior Manim/video-code benchmarks. The visual reward echoes RLHF-with-rendered-output work in diagram and UI generation.

Open Questions

- Does the approach scale to >30B models or proprietary APIs?

- How robust is the visual similarity metric against stylistic but semantically correct variations?

- Why do inference-time enhancements weaken the correlation between code and visual metrics? Could this indicate reward hacking?

- Does the approach generalise beyond Manim (e.g., Blender, p5.js)?

- How do the cost and latency of RITL-DOC compare with its quality gains?

Figures

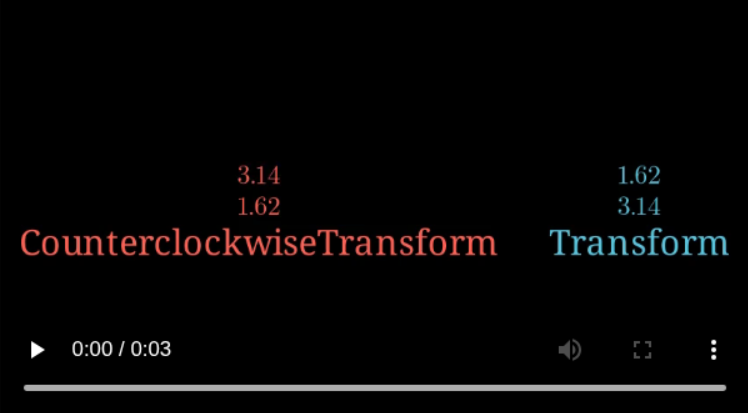

Figure 1 (extracted from PDF)

Figure 2 (extracted from PDF)

Figure 3 (extracted from PDF)

Original abstract

Generating programmatic animation using libraries such as Manim presents unique challenges for Large Language Models (LLMs), requiring spatial reasoning, temporal sequencing, and familiarity with domain-specific APIs that are underrepresented in general pre-training data. A systematic study of how training and inference strategies interact in this setting is lacking in current research. This study introduces ManimTrainer, a training pipeline that combines Supervised Fine-tuning (SFT) with Reinforcement Learning (RL) based Group Relative Policy Optimisation (GRPO) using a unified reward signal that fuses code and visual assessment signals, and ManimAgent, an inference pipeline featuring Renderer-in-the-loop (RITL) and API documentation-augmented RITL (RITL-DOC) strategies. Using these techniques, this study presents the first unified training and inference study for text-to-code-to-video transformation with Manim. It evaluates 17 open-source sub-30B LLMs across nine combinations of training and inference strategies using ManimBench. Results show that SFT generally improves code quality, while GRPO enhances visual outputs and increases the models’ responsiveness to extrinsic signals during self-correction at inference time. The Qwen 3 Coder 30B model with GRPO and RITL-DOC achieved the highest overall performance, with a 94% Render Success Rate (RSR) and 85.7% Visual Similarity (VS) to reference videos, surpassing the baseline GPT-4.1 model by +3 percentage points in VS. Additionally, the analysis shows that the correlation between code and visual metrics strengthens with SFT and GRPO but weakens with inference-time enhancements, highlighting the complementary roles of training and agentic inference strategies in Manim animation generation.