arXiv: 2604.18529 · PDF

Authors: Mao Lin, Xi Wang, Guilherme Cox, Dong Li, Hyeran Jeon

Primary category: cs.PF · all: cs.DC, cs.PF

Matched keywords: llm, rag, inference, kv cache, parallelism, attention, gpu, scheduler

TL;DR

HybridGen is a CPU-GPU hybrid attention framework for long-context LLM inference that leverages CXL-expanded tiered memory. By coordinating attention computation across CPU and GPU, it outperforms six SOTA KV cache management methods by 1.41x-3.2x while preserving accuracy.

Key Ideas

- Existing KV cache pruning/offloading underutilizes hardware by computing attention on only one device.

- Tiered memory (e.g., CXL) expands CPU-local KV capacity but introduces NUMA penalties.

- Collaborative CPU-GPU attention needs new parallelism, scheduling, and data placement strategies.

- Three challenges: multi-dim attention dependencies, load imbalance with long sequences, NUMA penalty.

Approach

HybridGen introduces three mechanisms:

- Attention logit parallelism — partitions attention computation across CPU and GPU while respecting multi-dimensional dependencies.

- Feedback-driven scheduler — dynamically rebalances CPU-GPU workload as sequence length grows.

- Semantic-aware KV cache mapping — places KV entries across tiered memory (GPU HBM, local DRAM, CXL) to mitigate NUMA penalties based on access semantics.

Experiments

- 3 LLM families × 11 model sizes.

- 3 GPU platforms equipped with CXL-expanded memory.

- Compared against 6 state-of-the-art KV cache management baselines (pruning/offloading methods; specific names not listed in abstract).

- Metrics: inference throughput/latency speedup and accuracy.

Results

- 1.41x-3.2x average speedup over six SOTA KV cache management methods.

- Maintains “superior accuracy” (no numbers in abstract).

- Abstract lacks per-model or per-sequence-length breakdowns.

Why It Matters

For AI-infra practitioners deploying long-context LLMs, HybridGen shows that CXL tiered memory plus coordinated CPU-GPU attention can unlock KV capacity beyond HBM limits without sacrificing throughput — relevant for serving million-token contexts on heterogeneous servers rather than scaling GPU memory alone.

Connections to Prior Work

- KV cache offloading: FlexGen, DeepSpeed-Inference, vLLM CPU-offload.

- KV cache pruning/eviction: H2O, Scissorhands, StreamingLLM, SnapKV.

- Heterogeneous CPU-GPU inference: FlexGen, FastDecode, PowerInfer.

- Tiered/CXL memory systems: prior OS-level NUMA-aware placement and CXL memory disaggregation research.

Open Questions

- Which specific six baselines are compared, and how does HybridGen fare against each individually?

- How sensitive are gains to CXL latency/bandwidth variations across hardware generations?

- Does the scheduler generalize to batched multi-request serving, or only single-stream decoding?

- What is the accuracy delta quantitatively, and on which long-context benchmarks?

- Power/energy implications of keeping CPUs busy on attention during decode.

- Applicability to MoE or multi-modal models with different KV patterns.

Figures

Figure 1: Page 2 (rendered)

Figure 2: Page 3 (rendered)

Figure 3: Page 4 (rendered)

Original abstract

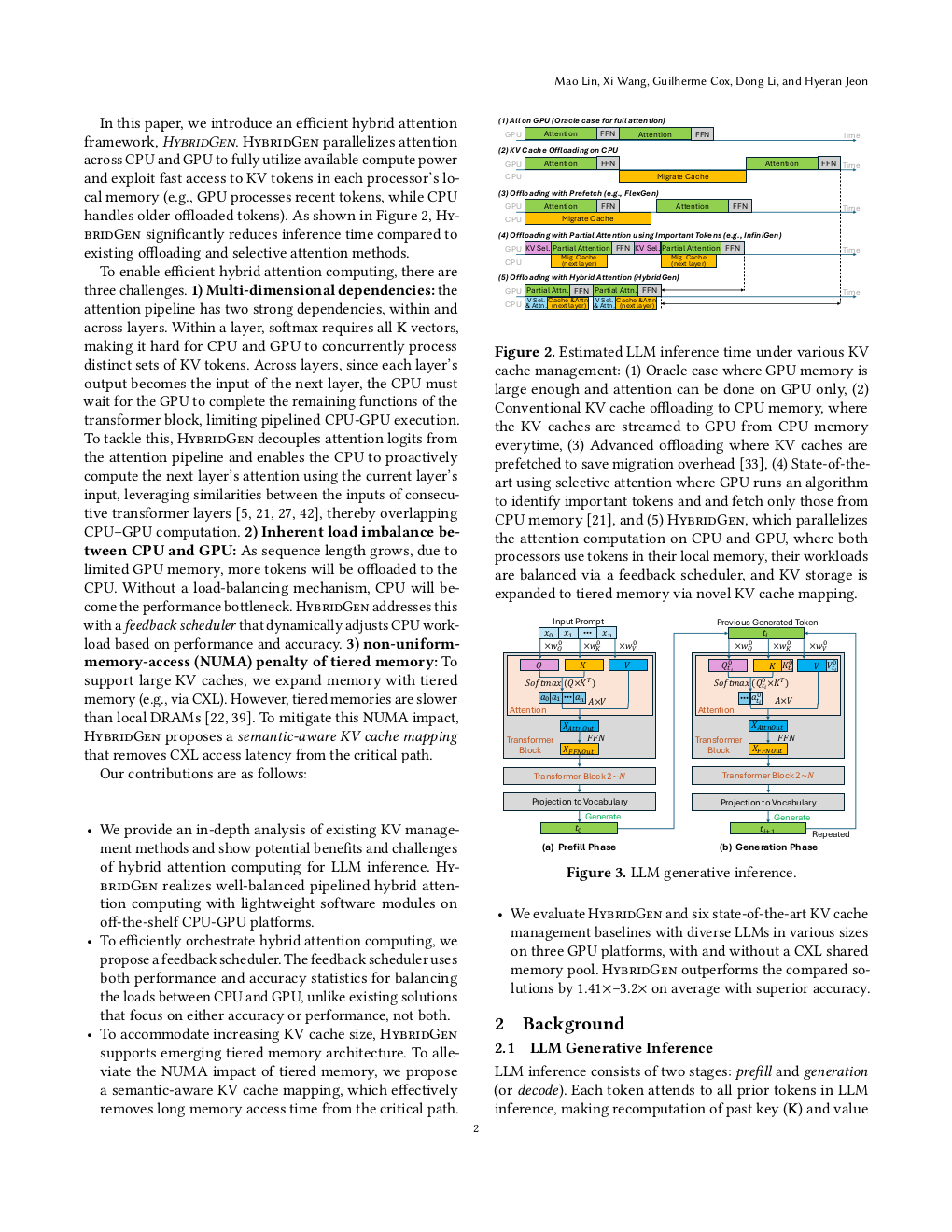

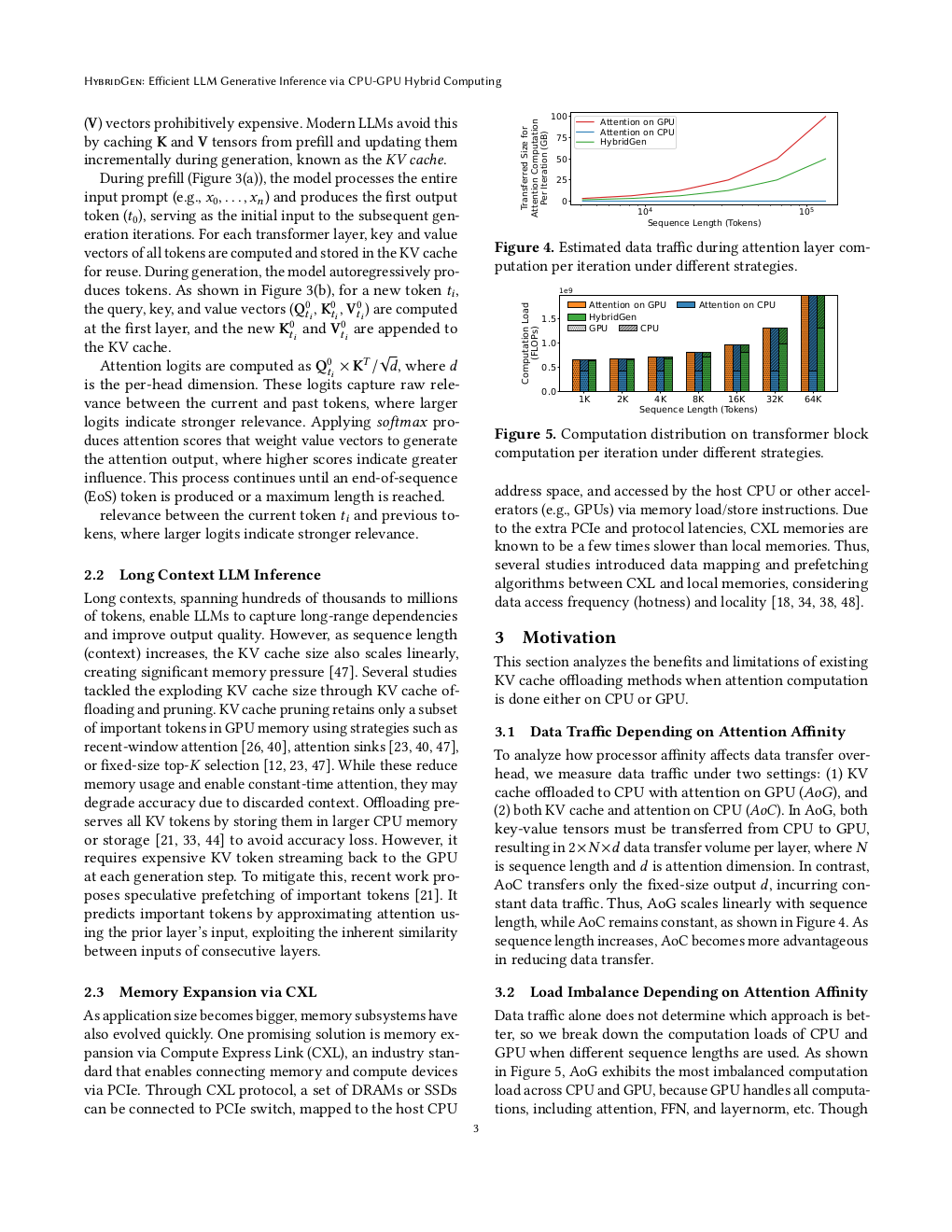

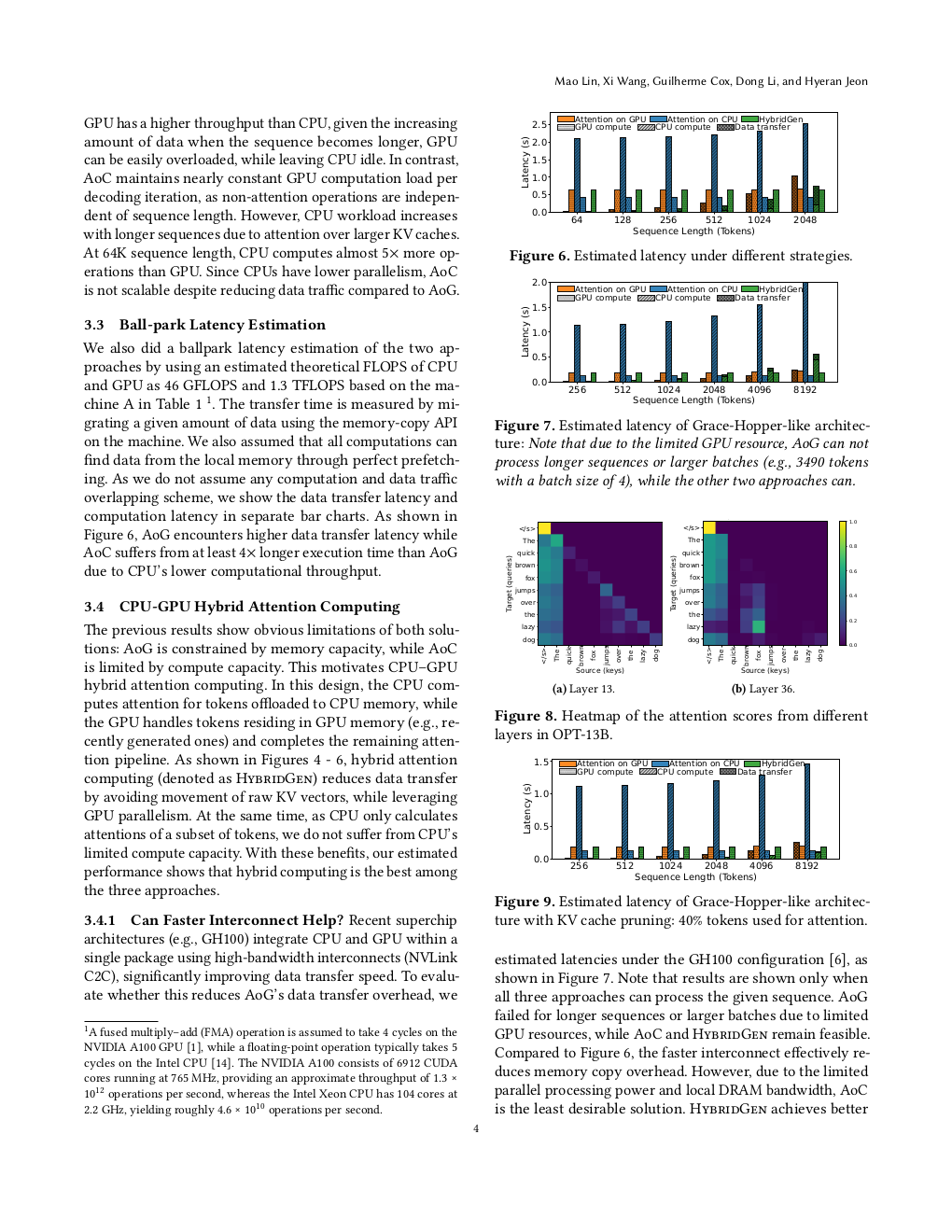

As modern LLMs support thousands to millions of tokens, KV caches grow to hundreds of gigabytes, stressing memory capacity and bandwidth. Existing solutions, such as KV cache pruning and offloading, alleviate these but underutilize hardware by relying solely on either GPU or CPU for attention computing, and considering yet limited CPU local memory for KV cache storage. We propose HybridGen, an efficient hybrid attention framework for long-context LLM inference. HybridGen enables CPU-GPU collaborative attention on systems with expanded tiered memory (e.g., CXL memory), addressing three key challenges: (1) multi-dimensional attention dependencies, (2) intensifying CPU-GPU load imbalance with longer sequences, and (3) NUMA penalty of tiered memories. HybridGen tackles these by introducing attention logit parallelism, a feedback-driven scheduler, and semantic-aware KV cache mapping. Experiments with three LLM models with eleven different sizes on three GPU platforms with a CXL-expanded memory show that HybridGen outperforms six state-of-the-art KV cache management methods by 1.41x–3.2x on average while maintaining superior accuracy.