arXiv: 2604.18396 · PDF

Authors: Yingtao Shen, An Zou

Primary category: cs.CL · all: cs.CL

Matched keywords: large language model, llm, reasoning, inference, kv cache, latency

TL;DR

River-LLM is a training-free Early Exit framework for decoder-only LLMs. It addresses the KV Cache Absence problem with a lightweight KV-Shared Exit River and reports 1.71–2.16× wall-clock speedup on mathematical reasoning and code generation tasks while maintaining high generation quality.

Key Ideas

- Identifies KV Cache Absence as the core bottleneck preventing Early Exit from delivering practical speedup in decoder-only LLMs.

- Proposes a KV-Shared Exit River: a lightweight path that generates and preserves the KV entries of skipped backbone layers during the exit process, avoiding recomputation or masking.

- Uses state transition similarity within decoder blocks to predict cumulative KV errors and drive per-token exit decisions.

- Training-free: applies to existing models without fine-tuning.
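The KV Cache Absence problem named in the first bullet can be made concrete with a toy bookkeeping model. This is a minimal sketch, not the paper's code: `NUM_LAYERS`, `decode_with_early_exit`, and `missing_entries` are hypothetical names, and the "cache" only records which token positions have a KV entry at each layer.

```python
# Toy bookkeeping model of KV Cache Absence (hypothetical names, not the paper's code).
NUM_LAYERS = 4


def decode_with_early_exit(exit_depths):
    """exit_depths[t] is the number of layers token t actually runs (1..NUM_LAYERS).

    Returns cache[layer] = set of token positions that wrote a KV entry at that layer.
    """
    cache = [set() for _ in range(NUM_LAYERS)]
    for pos, depth in enumerate(exit_depths):
        for layer in range(depth):
            # A layer writes a KV entry only for tokens that pass through it.
            cache[layer].add(pos)
    return cache


def missing_entries(cache, pos, depth):
    """For a token at `pos` running `depth` layers, list the earlier positions
    whose KV entries are absent at each layer it needs to attend over."""
    needed = set(range(pos))
    gaps = {}
    for layer in range(depth):
        absent = sorted(needed - cache[layer])
        if absent:
            gaps[layer] = absent
    return gaps


# Token 1 exits after 2 layers; token 2 then runs all 4 layers.
cache = decode_with_early_exit([4, 2, 4])
gaps = missing_entries(cache, pos=2, depth=4)
print(gaps)
```

In this toy run, layers 2 and 3 have no entry for token 1, so token 2 cannot attend to it there. Recomputation and masking are the two recovery strategies the abstract says are costly or lossy; the Exit River is described as avoiding both.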

Approach

River-LLM adds a lightweight side path (the “Exit River”) that shares KV states with the backbone, so that when a token exits early, the KV cache entries of the layers it skipped are still generated and preserved for later tokens. Exit decisions are made token by token: state transition similarity within decoder blocks is used to predict the cumulative KV error, and that prediction guides where each token exits. No retraining is required.
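The abstract does not give the predictor's formula, so the following is only an illustrative sketch of a similarity-driven exit rule. Everything in it is an assumption: the use of cosine similarity between a block's input and output state, the proxy that each skipped block would change the state about as much as the last block run, and the names `cosine`, `choose_exit_layer`, and `error_budget`.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (1.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 1.0


def choose_exit_layer(hidden_states, error_budget=0.05):
    """Pick how many blocks to run for one token.

    hidden_states[i] is the state entering block i; the last item is the
    output of the final block. In a real decoder these would be produced
    one block at a time; they are passed in whole here to keep the sketch small.
    """
    num_blocks = len(hidden_states) - 1
    for i in range(num_blocks):
        # How much block i changed the state (0 means no change in direction).
        change = 1.0 - cosine(hidden_states[i], hidden_states[i + 1])
        remaining = num_blocks - (i + 1)
        # ASSUMED proxy for cumulative error: every skipped block would have
        # changed the state about as much as block i just did.
        if remaining * change <= error_budget:
            return i + 1
    return num_blocks


# Hypothetical 2-d states for a 4-block model: a large change in block 0,
# then the state settles.
states = [[1.0, 0.0], [0.6, 0.8], [0.5, 0.866], [0.5, 0.87], [0.5, 0.87]]
exit_after = choose_exit_layer(states)
print(exit_after)
```

With these made-up states the rule exits after two of the four blocks. Setting `error_budget=0.0` forces full depth, which is a convenient way to check the rule against vanilla inference.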

Experiments

Evaluated on mathematical reasoning and code generation benchmarks (specific datasets are not named in the abstract). The abstract implies comparison against full-depth inference and against prior Early Exit methods that rely on recomputation or masking. Metrics: wall-clock speedup and generation quality.

Results

- 1.71×–2.16× practical speedup over full-depth inference.

- Generation quality reported as preserved (“high”), though the abstract gives no absolute accuracy numbers or baseline deltas.
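To read the speedup range as a latency change, the conversion is 1 − 1/speedup. The snippet below only does that arithmetic on the two reported endpoints; the helper name `latency_reduction` is ours, and no figure beyond 1.71× and 2.16× comes from the paper.

```python
def latency_reduction(speedup):
    """Fraction of wall-clock time saved at a given speedup factor."""
    return 1.0 - 1.0 / speedup


for s in (1.71, 2.16):
    print(f"{s:.2f}x speedup -> {latency_reduction(s):.1%} less wall-clock time")
```

This works out to roughly 41.5% and 53.7% less wall-clock time per generation. If latency scaled purely with the number of layers executed, which ignores any overhead of the Exit River itself, the same figures would correspond to running about 58% and 46% of the layers on average.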

Why It Matters

Targets the gap between theoretical layer-skip savings and real latency gains in decoder-only LLMs. For inference-infrastructure practitioners, it promises drop-in acceleration with no retraining, which is relevant for serving reasoning and code workloads where depth is often over-provisioned.

Connections to Prior Work

- Early Exit literature (DeeBERT, CALM, SkipDecode): River-LLM focuses on token-level exit in decoder-only models and the KV cache gaps it creates.

- KV cache optimization (H2O, StreamingLLM, KV compression): a complementary axis (depth vs. length).

- Adaptive computation / layer skipping (MoD, LayerSkip): similar motivation, but these typically require training, whereas River-LLM is training-free.

- Speculative decoding: an alternative approach to wall-clock acceleration.

Open Questions

- Which exact datasets/models were used? Abstract omits specifics (LLaMA? Qwen? GSM8K? HumanEval?).

- How does quality degrade at the high-speedup end (2.16×)?

- Does the similarity-based error predictor generalize to long-context or non-reasoning tasks (chat, summarization)?

- What are the memory overhead and implementation cost of the Exit River compared with pure layer skipping?

- How does it interact with speculative decoding, quantization, or tensor parallelism in production serving stacks?

Figures

Figure 1: Page 2 (rendered)

Figure 2: Page 3 (rendered)

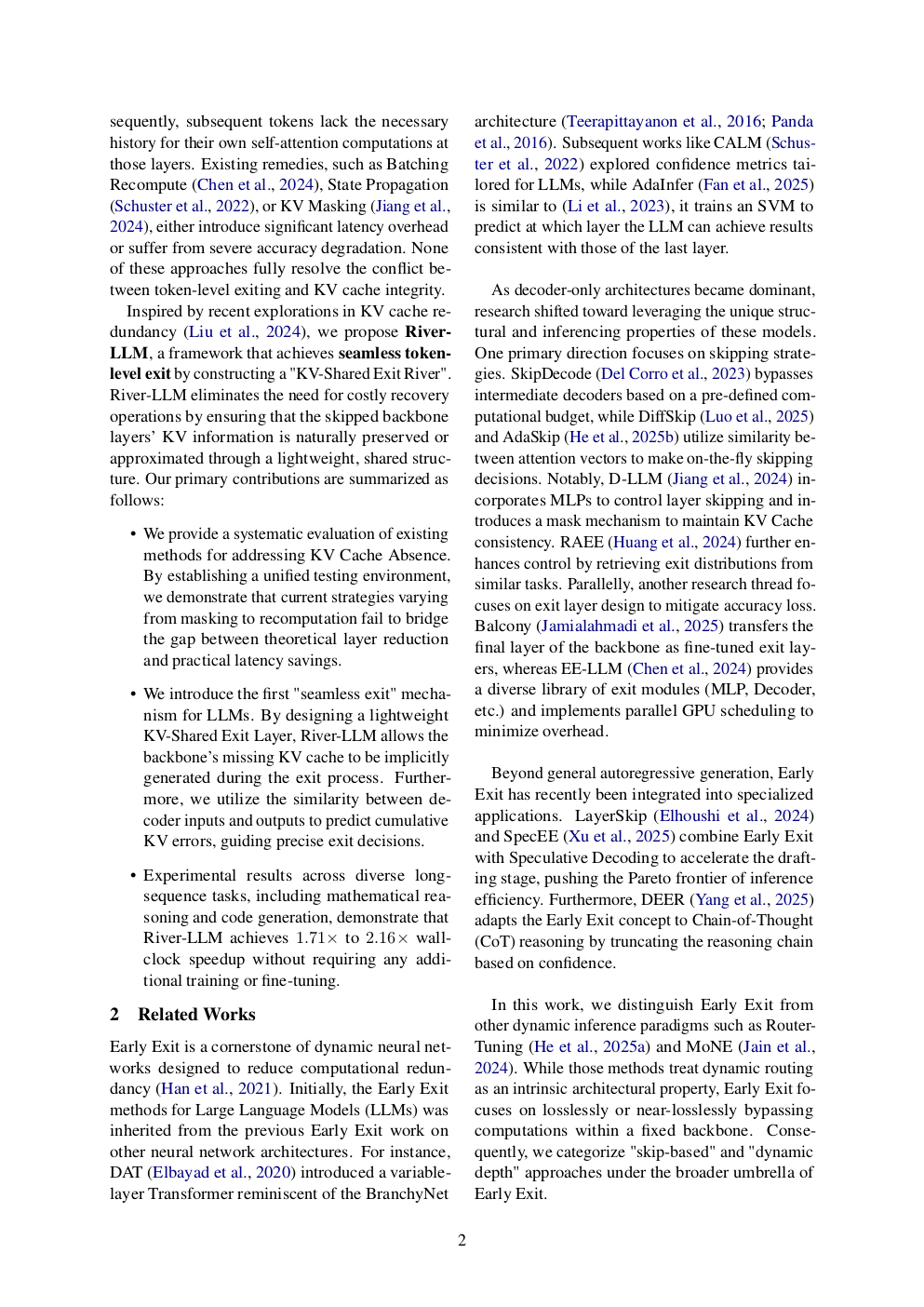

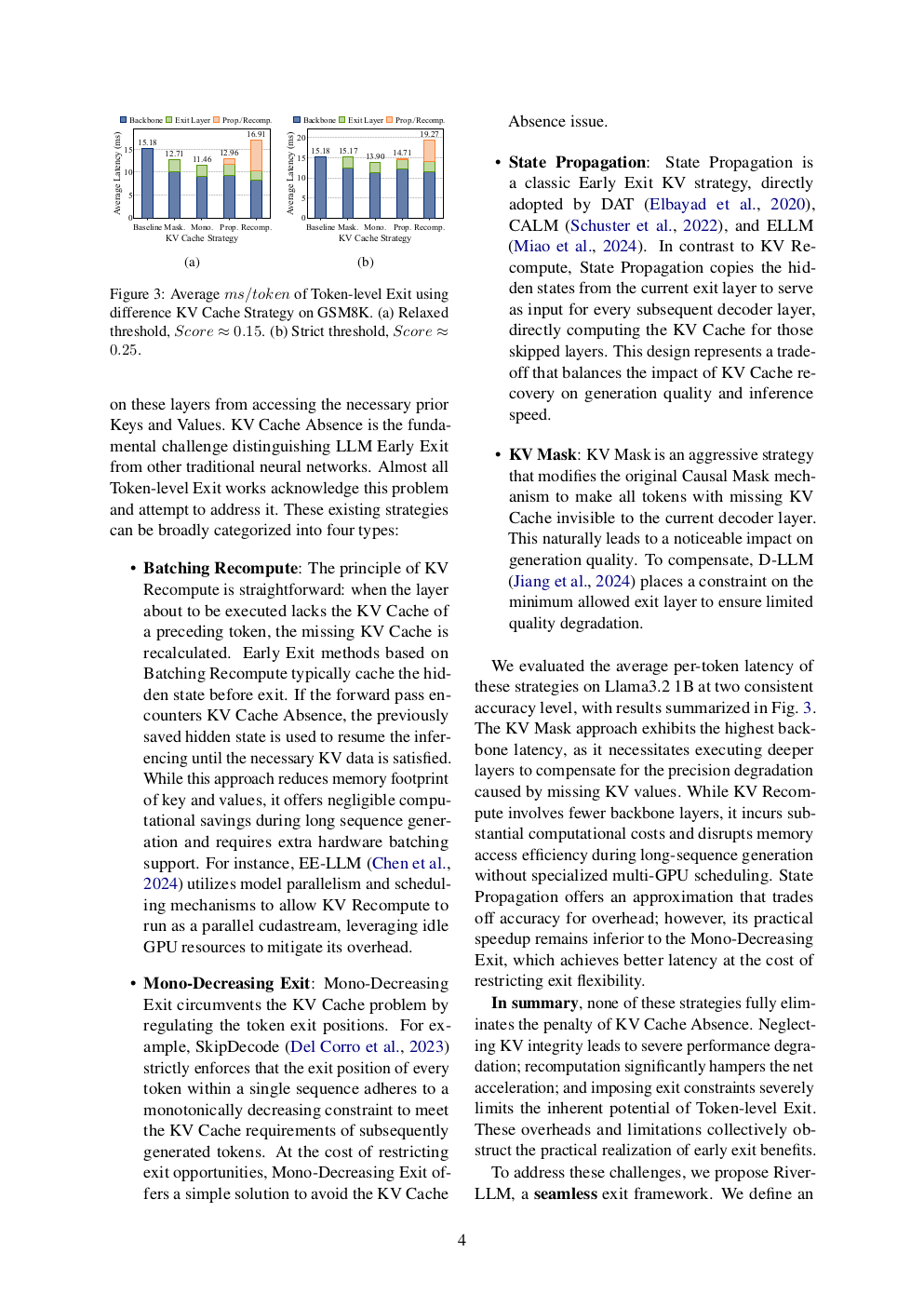

Figure 3: Page 4 (rendered)

Original abstract

Large Language Models (LLMs) have demonstrated exceptional performance across diverse domains but are increasingly constrained by high inference latency. Early Exit has emerged as a promising solution to accelerate inference by dynamically bypassing redundant layers. However, in decoder-only architectures, the efficiency of Early Exit is severely bottlenecked by the KV Cache Absence problem, where skipped layers fail to provide the necessary historical states for subsequent tokens. Existing solutions, such as recomputation or masking, either introduce significant latency overhead or incur severe precision loss, failing to bridge the gap between theoretical layer reduction and practical wall-clock speedup. In this paper, we propose River-LLM, a training-free framework that enables seamless token-level Early Exit. River-LLM introduces a lightweight KV-Shared Exit River that allows the backbone’s missing KV cache to be naturally generated and preserved during the exit process, eliminating the need for costly recovery operations. Furthermore, we utilize state transition similarity within decoder blocks to predict cumulative KV errors and guide precise exit decisions. Extensive experiments on mathematical reasoning and code generation tasks demonstrate that River-LLM achieves 1.71 to 2.16 times of practical speedup while maintaining high generation quality.