arXiv: 2604.19856 · PDF

Authors: Cagri Eryilmaz

Primary category: cs.AR · all: cs.AI, cs.AR, cs.LG

Matched keywords: large language model, llm, agent, agentic, multi-agent, retrieval, rag, reasoning

TL;DR

ChipCraftBrain is a multi-agent RTL generation framework combining PPO-driven orchestration, symbolic-neural reasoning, and knowledge retrieval. It hits 97.2% pass@1 on VerilogEval-Human and 94.7% on a 302-problem CVDP subset, outperforming MAGE and matching ChipAgents while using far fewer attempts than NVIDIA’s ACE-RTL.

Key Ideas

- Adaptive orchestration of six specialized agents via a PPO policy over a 168-dim state (with an MPC world-model alternative).

- Hybrid symbolic-neural architecture: algorithmic solvers for K-maps/truth tables, neural agents for waveforms and general RTL.

- Knowledge-augmented retrieval from 321 patterns + 971 open-source reference implementations with focus-aware lookup.

- Hierarchical spec decomposition into dependency-ordered sub-modules with interface synchronization.

Approach

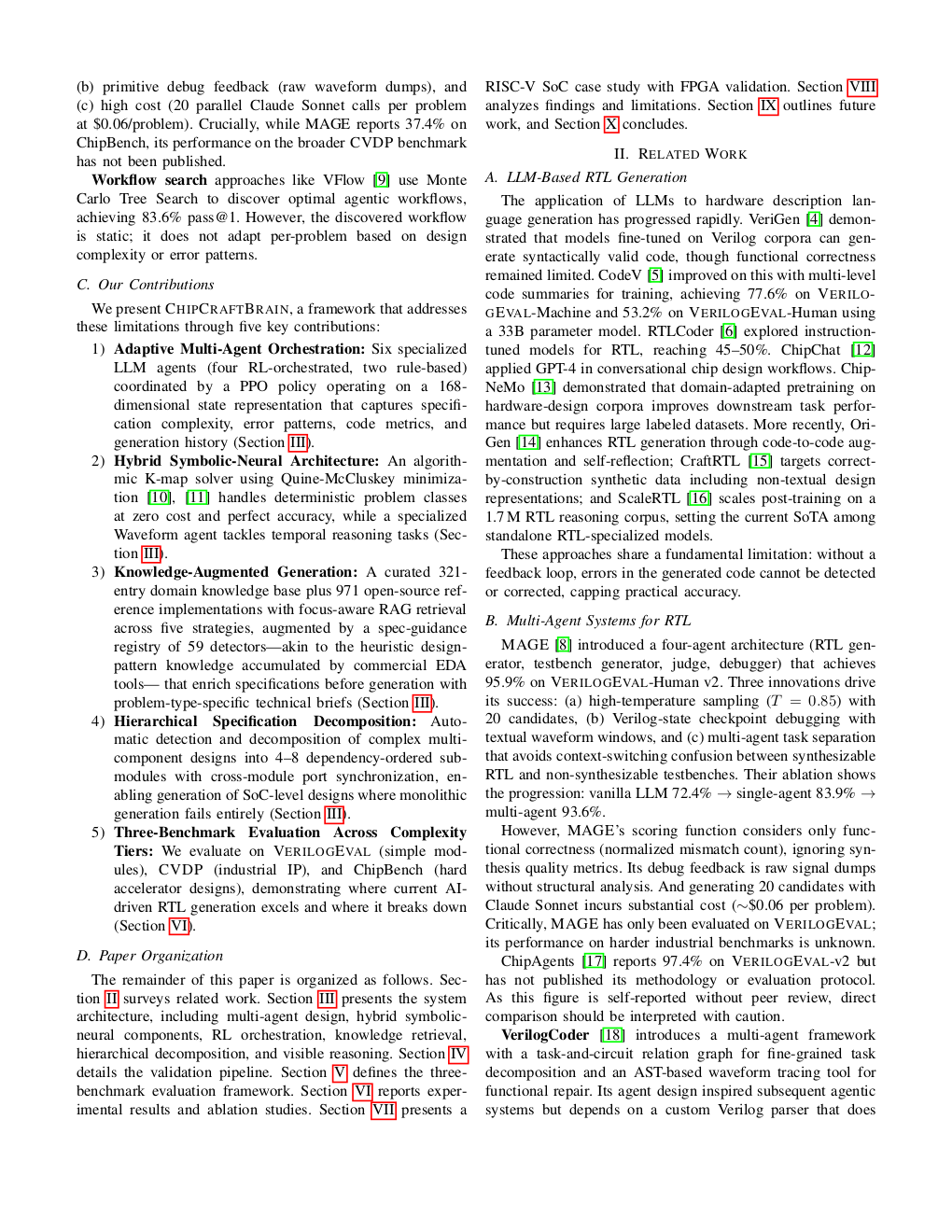

A controller learns (PPO) to route tasks among six agents depending on problem state. Symbolic solvers handle combinational logic exactly; neural agents handle timing/waveforms. A retrieval module injects reference patterns. Complex specs are decomposed hierarchically with cross-module interface synchronization before code generation and validation.

Experiments

- VerilogEval-Human: pass@1, 7 runs.

- CVDP non-agentic subset: 302 problems, 5 task categories, 3 runs; compared with single-shot baseline and NVIDIA’s ACE-RTL.

- RISC-V SoC case study: 8-module hierarchical decomposition, lint + FPGA validation.

- Baselines: MAGE, ChipAgents (self-reported), ACE-RTL, published single-shot.

Results

- VerilogEval-Human: 97.2% mean pass@1 (96.15-98.72%), best 154/156; ties ChipAgents, beats MAGE 95.9%.

- CVDP subset: 94.7% (286/302), +36-60 pp per category over single-shot; leads 3/4 shared categories with ACE-RTL at ~30× fewer attempts.

- RISC-V SoC: 8/8 lint-passing modules (689 LOC), FPGA-validated; monolithic baseline fails outright.

Why It Matters

Pushes LLM-driven RTL design from toy benchmarks toward industrial viability (CVDP, real SoC), showing that symbolic offloading plus RL-based orchestration can both raise accuracy and cut API cost—relevant for agent designers budgeting compute and infra teams exploring chip design automation.

Connections to Prior Work

- Multi-agent RTL: MAGE, ChipAgents, NVIDIA ACE-RTL.

- Benchmarks: VerilogEval, CVDP.

- Symbolic-neural hybrids for program synthesis.

- PPO-based agent orchestration and MPC/world-model planning.

- Retrieval-augmented code generation.

Open Questions

- How robust is the PPO policy under distribution shift to unseen IP styles?

- Performance on agentic CVDP tasks (explicitly excluded here) and larger SoCs beyond RISC-V.

- Cost/latency breakdown vs. MAGE and ChipAgents at equal budgets.

- Sensitivity to the 321+971 knowledge base—does it generalize without curated patterns?

- Independent reproduction of ChipAgents’ self-reported 97.4%.

Figures

Figure 1: Page 2 (rendered)

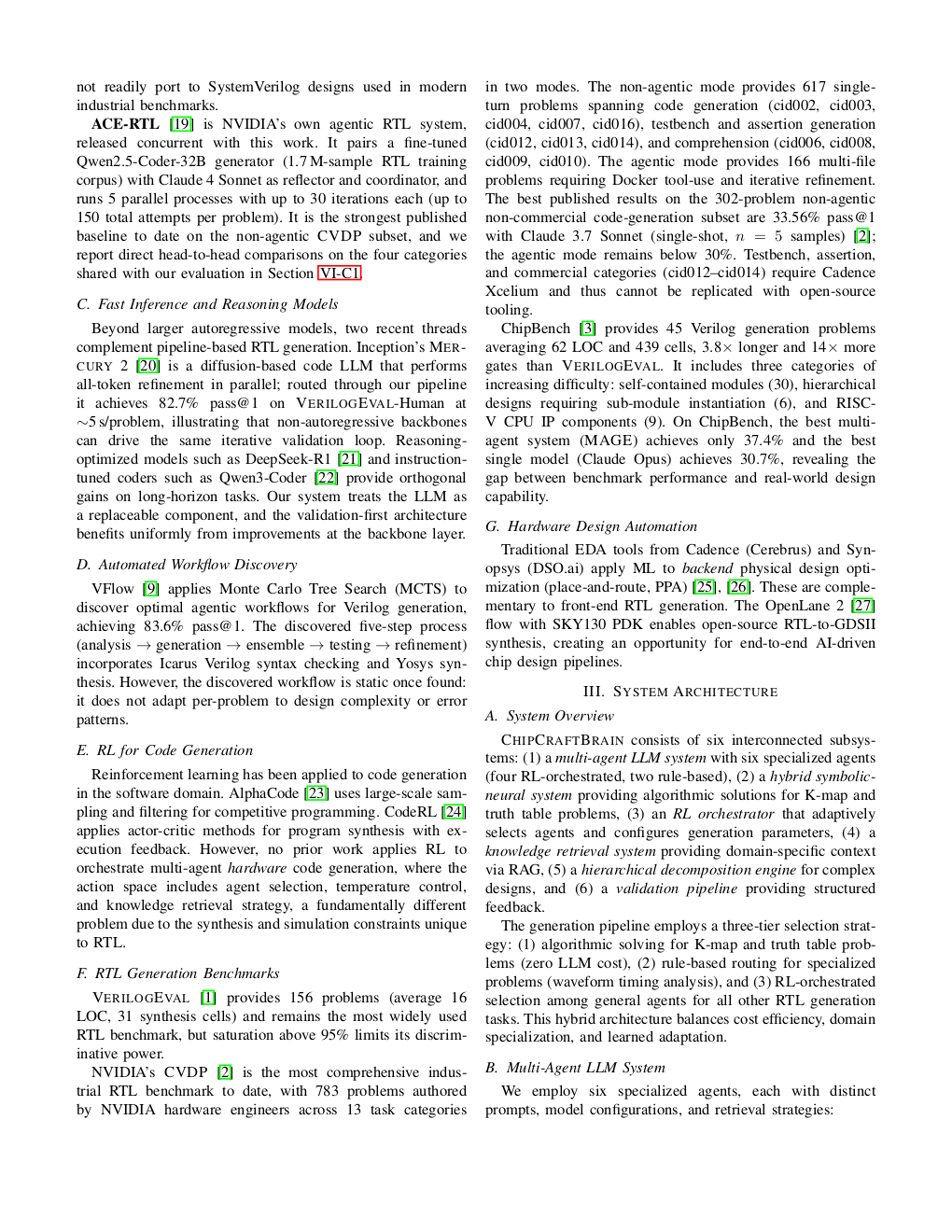

Figure 2: Page 3 (rendered)

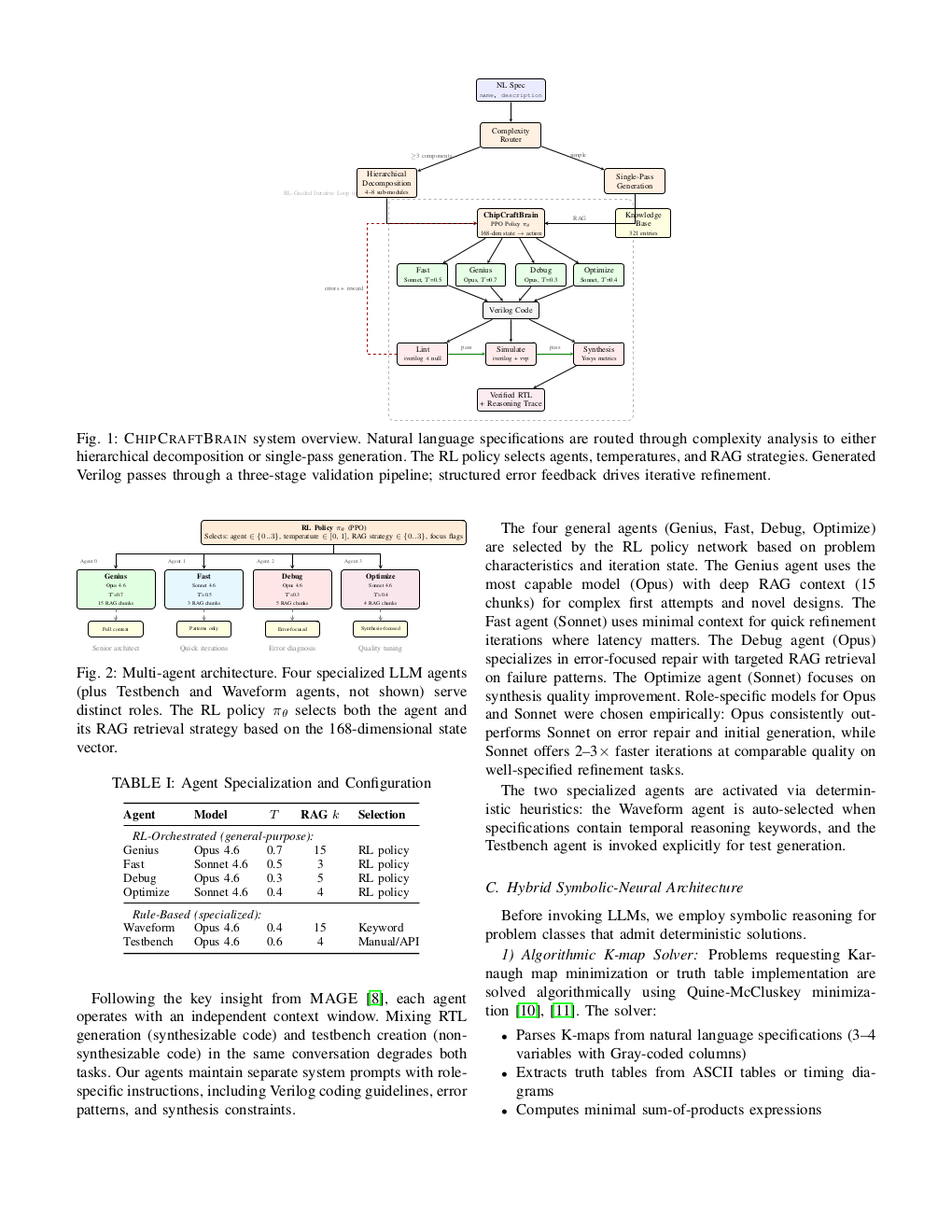

Figure 3: Page 4 (rendered)

Original abstract

Large Language Models (LLMs) show promise for generating Register-Transfer Level (RTL) code from natural language specifications, but single-shot generation achieves only 60-65% functional correctness on standard benchmarks. Multi-agent approaches such as MAGE reach 95.9% on VerilogEval yet remain untested on harder industrial benchmarks such as NVIDIA’s CVDP, lack synthesis awareness, and incur high API costs. We present ChipCraftBrain, a framework combining symbolic-neural reasoning with adaptive multi-agent orchestration for automated RTL generation. Four innovations drive the system: (1) adaptive orchestration over six specialized agents via a PPO policy over a 168-dim state (an alternative world-model MPC planner is also evaluated); (2) a hybrid symbolic-neural architecture that solves K-map and truth-table problems algorithmically while specialized agents handle waveform timing and general RTL; (3) knowledge-augmented generation from a 321-pattern base plus 971 open-source reference implementations with focus-aware retrieval; and (4) hierarchical specification decomposition into dependency-ordered sub-modules with interface synchronization. On VerilogEval-Human, ChipCraftBrain achieves 97.2% mean pass@1 (range 96.15-98.72% across 7 runs, best 154/156), on par with ChipAgents (97.4%, self-reported) and ahead of MAGE (95.9%). On a 302-problem non-agentic subset of CVDP spanning five task categories, we reach 94.7% mean pass@1 (286/302, averaged over 3 runs), a 36-60 percentage-point lift per category over the published single-shot baseline; we additionally lead three of four categories shared with NVIDIA’s ACE-RTL despite using roughly 30x fewer per-problem attempts. A RISC-V SoC case study demonstrates hierarchical decomposition generating 8/8 lint-passing modules (689 LOC) validated on FPGA, where monolithic generation fails entirely.