arXiv: 2604.21264 · PDF

Authors: Minping Chen, Bing Xu, Zulong Chen, Chuanfei Xu, Ying Zhou, Zui Tao, Zeyi Wen

Primary category: cs.AI · all: cs.AI

Matched keywords: large language model, llm, rag, chain-of-thought, mixture of experts, moe

TL;DR

The paper proposes an LLM-enhanced Person-Job Fit (PJF) system combining chain-of-thought data augmentation for low-quality job descriptions with a category-aware Mixture of Experts module to better distinguish similar candidate-job pairs, yielding measurable gains in offline metrics and online A/B tests.

Key Ideas

- LLM-based data augmentation using chain-of-thought prompts to polish and rewrite low-quality job descriptions.

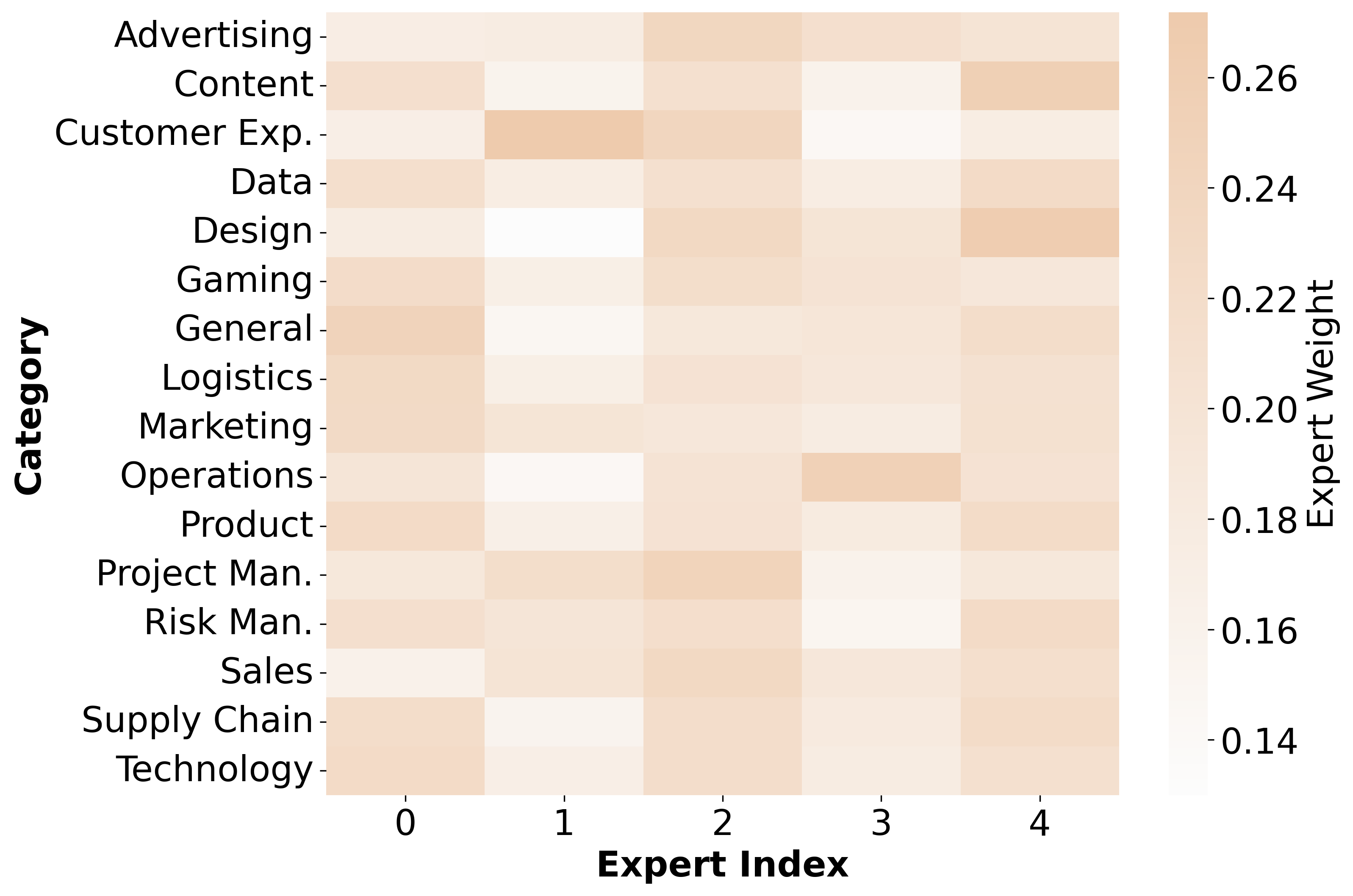

- Category-aware MoE that uses category embeddings to dynamically weight experts.

- MoE learns more discriminative patterns for similar (hard-to-distinguish) candidate-job pairs.

- Validated both offline and in production A/B tests with business-impact metrics.
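The first idea above, CoT-prompted rewriting of low-quality job descriptions, can be pictured with a small prompt builder. This is only a sketch: the abstract does not disclose the actual prompt, so the step wording, the `REWRITTEN:` marker, and the names `COT_STEPS` and `build_rewrite_prompt` are all hypothetical.

```python
# Hypothetical reasoning steps; the paper's real prompt is not given in the abstract.
COT_STEPS = [
    "List the responsibilities and requirements stated explicitly in the original text.",
    "Identify what is vague, duplicated, or missing, without inventing new requirements.",
    "Rewrite the description clearly, keeping only facts supported by the original text.",
]


def build_rewrite_prompt(job_title, job_description):
    """Assemble a chain-of-thought style prompt asking an LLM to polish a job description."""
    steps = "\n".join(f"{i}. {step}" for i, step in enumerate(COT_STEPS, start=1))
    return (
        "You are polishing a job description for a recruitment platform.\n"
        f"Job title: {job_title}\n"
        f"Original description:\n{job_description.strip()}\n\n"
        "Think step by step:\n"
        f"{steps}\n"
        "Finish with the rewritten description after the line 'REWRITTEN:'."
    )


prompt = build_rewrite_prompt("Backend Engineer", "  need java ppl, urgent!!  ")
```

The resulting string would be sent to whichever LLM the platform uses; constraining the steps to facts already present in the original text is one way to limit hallucinated requirements.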

Approach

The pipeline has two components. First, an LLM rewrites low-quality job descriptions via chain-of-thought (CoT) prompting, producing cleaner, more informative inputs for downstream matching. Second, a category-aware MoE scores candidate-job pairs with a set of experts whose weights are assigned dynamically from category embeddings. According to the abstract, this lets the model learn more distinguishable patterns for similar candidate-job pairs; a plausible reading is that each expert specializes in patterns tied to particular job or candidate categories.
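The category-aware gating described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' architecture: the abstract does not specify layer sizes, how the category embedding enters the gate, or the expert structure. Here the gate is assumed to be a softmax over a linear map of the pair features concatenated with a category embedding, and each expert is a single ReLU layer. All names (`CategoryAwareMoE`, `gate`, `forward`) are hypothetical, and the weights are random rather than trained.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]


def rand_matrix(rng, rows, cols):
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


class CategoryAwareMoE:
    """Toy MoE whose gate sees both the pair features and a category embedding."""

    def __init__(self, in_dim, n_categories, cat_dim, n_experts, hidden, seed=0):
        rng = random.Random(seed)
        self.cat_emb = rand_matrix(rng, n_categories, cat_dim)
        self.experts = [rand_matrix(rng, hidden, in_dim) for _ in range(n_experts)]
        self.gate_w = rand_matrix(rng, n_experts, in_dim + cat_dim)
        self.out_w = [rng.gauss(0.0, 0.1) for _ in range(hidden)]

    def gate(self, pair_features, category_id):
        # Assumption: the category embedding is concatenated to the gate input.
        gate_input = pair_features + self.cat_emb[category_id]
        return softmax(matvec(self.gate_w, gate_input))

    def forward(self, pair_features, category_id):
        weights = self.gate(pair_features, category_id)
        mixed = [0.0] * len(self.out_w)
        for weight, expert in zip(weights, self.experts):
            hidden = [max(0.0, h) for h in matvec(expert, pair_features)]  # ReLU expert
            mixed = [m + weight * h for m, h in zip(mixed, hidden)]
        logit = sum(w * m for w, m in zip(self.out_w, mixed))
        return 1.0 / (1.0 + math.exp(-logit))  # match probability


model = CategoryAwareMoE(in_dim=4, n_categories=3, cat_dim=2, n_experts=3, hidden=5)
features = [0.2, -0.1, 0.7, 0.4]
gates_a = model.gate(features, 0)   # same pair features, category 0
gates_b = model.gate(features, 1)   # same pair features, category 1
score = model.forward(features, 0)
```

Because the gate input includes the category embedding, the same pair features receive different expert weights under different categories, which is the mechanism the abstract credits for separating similar pairs.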

Experiments

Evaluation is performed on the authors’ proprietary recruitment platform. Offline evaluation reports AUC and GAUC (group AUC) relative to existing PJF baselines. Online evaluation is an A/B test measuring click-through conversion rate (CTCVR) and downstream business cost (external headhunting expenses). Specific datasets, baselines, and ablation details are not disclosed in the abstract.
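For readers unfamiliar with GAUC, the sketch below uses the definition common in recommendation work: AUC is computed within each group and averaged with weights proportional to group size, skipping groups that contain only one class. The abstract does not say which grouping key the authors use (per candidate, per job, or something else), so `group_ids` is an assumption, as are the toy labels and scores.

```python
from collections import defaultdict


def auc(labels, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        return None  # undefined when a class is missing
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def gauc(group_ids, labels, scores):
    """Size-weighted average of per-group AUC; single-class groups are skipped."""
    groups = defaultdict(lambda: ([], []))
    for g, y, s in zip(group_ids, labels, scores):
        groups[g][0].append(y)
        groups[g][1].append(s)
    weighted, total = 0.0, 0
    for ys, ss in groups.values():
        value = auc(ys, ss)
        if value is None:
            continue
        weighted += value * len(ys)
        total += len(ys)
    return weighted / total if total else None


# Toy data: group "a" is ranked perfectly, group "b" is ranked backwards.
group_ids = ["a", "a", "a", "b", "b"]
labels = [1, 0, 0, 1, 0]
scores = [0.9, 0.3, 0.5, 0.2, 0.6]

global_auc = auc(labels, scores)              # 0.5
group_auc = gauc(group_ids, labels, scores)   # (1.0 * 3 + 0.0 * 2) / 5 = 0.6
```

The two numbers differ because GAUC only compares items within the same group, so it reflects per-user (or per-job) ranking quality rather than how scores compare across groups.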

Results

Relative improvements: +2.40% AUC and +7.46% GAUC offline. Online A/B tests show +19.4% CTCVR uplift and reported savings of millions of CNY in headhunting fees. The abstract does not report per-component ablations, so the marginal contribution of augmentation vs. MoE is unclear from the abstract alone.
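Since these gains are relative, the absolute change depends on the baseline value, which the abstract does not report. The snippet below makes the arithmetic explicit; the baseline AUC of 0.75 is purely hypothetical and chosen only for illustration, and the function names are likewise illustrative.

```python
def relative_gain(baseline, new):
    """Relative improvement of `new` over `baseline`, as a fraction."""
    return (new - baseline) / baseline


def apply_relative_gain(baseline, gain):
    """Value obtained by improving `baseline` by the relative fraction `gain`."""
    return baseline * (1.0 + gain)


hypothetical_baseline_auc = 0.75            # NOT from the paper
reported_relative_gain = 0.0240             # +2.40% relative AUC, from the abstract

implied_auc = apply_relative_gain(hypothetical_baseline_auc, reported_relative_gain)
absolute_delta = implied_auc - hypothetical_baseline_auc
```

Under this assumed baseline, a +2.40% relative gain corresponds to an absolute AUC change of about 0.018, which is much smaller than reading the figure as 2.4 absolute AUC points.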

Why It Matters

Suggests a practical recipe for integrating LLMs into production ranking and matching systems: use the LLM for data cleaning, which can run offline, rather than for online inference, and pair it with a lightweight specialized architecture (MoE) for the discrimination problem. The abstract does not state where in the pipeline the LLM runs, so the offline reading is an inference. A useful template for recommender and two-sided matching infrastructure teams where data quality and hard-negative similarity are bottlenecks.

Connections to Prior Work

Relates to the Person-Job Fit literature (PJFNN, BPJFNN, APJFNN), Mixture-of-Experts gating (MMoE, PLE) common in multi-task recommendation, and the growing line of LLM-driven data augmentation and rewriting (e.g., CoT-based synthetic data, LLM-as-annotator). Similar in spirit to category- and domain-aware gating approaches in multi-domain recommendation. The abstract does not name the specific prior work the paper compares against.

Open Questions

- What are the offline baselines and ablation contributions of each component?

- How robust is LLM rewriting to hallucination that could misrepresent job requirements?

- What are the latency, cost, and refresh cadence of LLM augmentation at scale?

- Does the method generalize beyond this platform’s category taxonomy and its (presumably Chinese-language) corpus?

- What are the fairness implications of rewriting job descriptions (e.g., bias amplification)?

Figures

Figure 1: extracted from PDF

Original abstract

Person-Job Fit (PJF) is a critical component for online recruitment. Existing approaches face several challenges, particularly in handling low-quality job descriptions and similar candidate-job pairs, which impair model performance. To address these challenges, this paper proposes a large language model (LLM) based method with two novel techniques: (1) LLM-based data augmentation, which polishes and rewrites low-quality job descriptions by leveraging chain-of-thought (COT) prompts, and (2) category-aware Mixture of Experts (MoE) that assists in identifying similar candidate-job pairs. This MoE module incorporates category embeddings to dynamically assign weights to the experts and learns more distinguishable patterns for similar candidate-job pairs. We perform offline evaluations and online A/B tests on our recruitment platform. Our method relatively surpasses existing methods by 2.40% in AUC and 7.46% in GAUC, and boosts click-through conversion rate (CTCVR) by 19.4% in online tests, saving millions of CNY in external headhunting expenses.