arXiv: 2604.22748 · PDF

Authors: Meng Chu, Xuan Billy Zhang, Kevin Qinghong Lin, Lingdong Kong, Jize Zhang, Teng Tu, Weijian Ma, Ziqi Huang, Senqiao Yang, Wei Huang, Yeying Jin, Zhefan Rao, Jinhui Ye, Xinyu Lin, Xichen Zhang, Qisheng Hu, Shuai Yang, Leyang Shen, Wei Chow, Yifei Dong, Fengyi Wu, Quanyu Long, Bin Xia, Shaozuo Yu, Mingkang Zhu, Wenhu Zhang, Jiehui Huang, Haokun Gui, Haoxuan Che, Long Chen, Qifeng Chen, Wenxuan Zhang, Wenya Wang, Xiaojuan Qi, Yang Deng, Yanwei Li, Mike Zheng Shou, Zhi-Qi Cheng, See-Kiong Ng, Ziwei Liu, Philip Torr, Jiaya Jia

Primary category: cs.AI · all: cs.AI

Matched keywords: agent, agentic, multi-agent, ai system

TL;DR

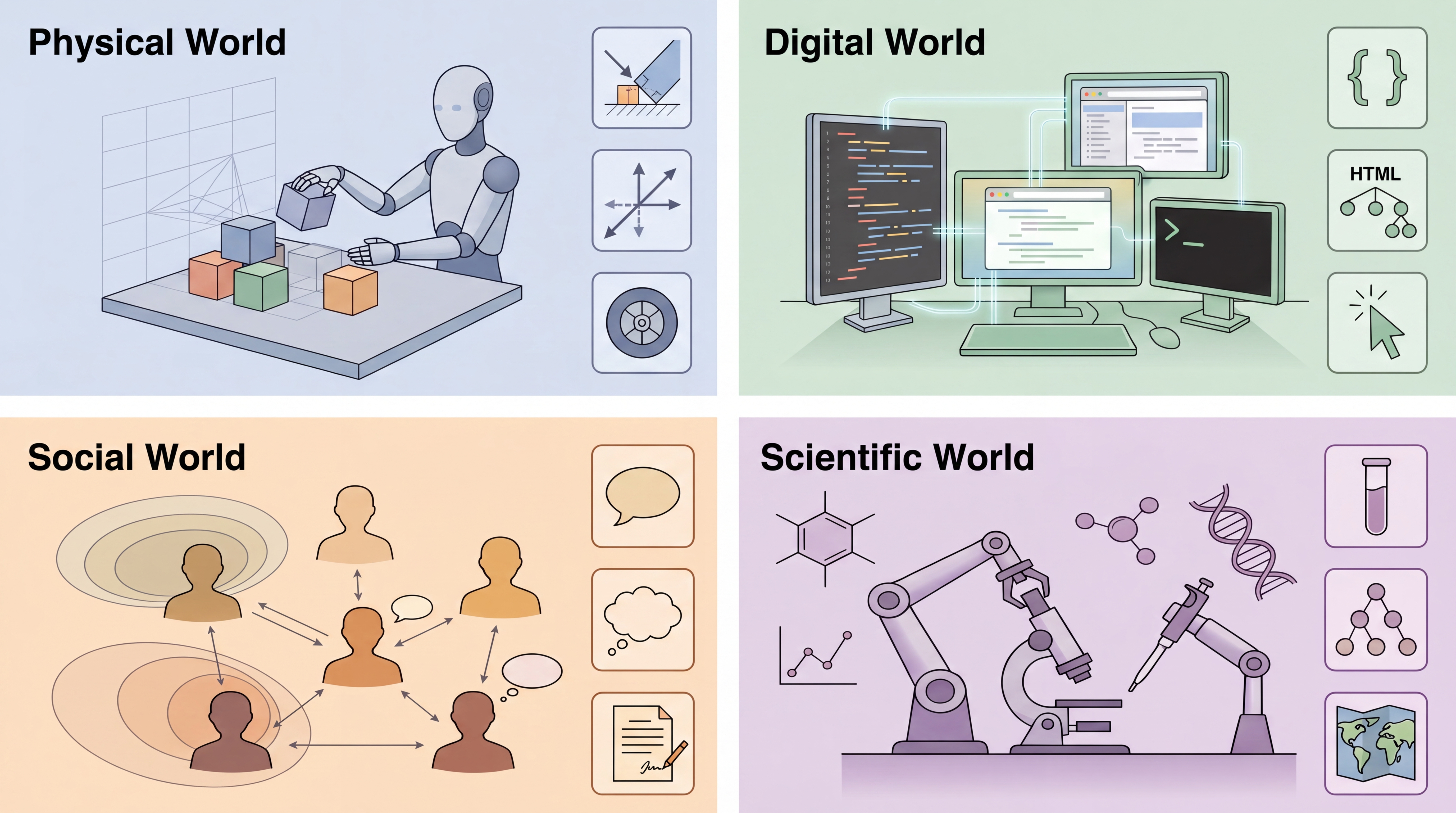

A survey proposing a “levels × laws” taxonomy for agentic world models: three capability tiers (Predictor, Simulator, Evolver) crossed with four law regimes (physical, digital, social, scientific). It synthesises 400+ works and 100+ systems, offering decision-centric evaluation principles and a roadmap.

Key Ideas

- Unify divergent “world model” definitions under one taxonomy.

- Levels: L1 Predictor (one-step transitions), L2 Simulator (multi-step rollouts), L3 Evolver (self-revising models).

- Laws: physical, digital, social, scientific — each dictates constraints and failure modes.

- Proposes decision-centric evaluation over passive next-step accuracy.

- Provides a minimal reproducible evaluation package.

Approach

This is a survey/position paper. Authors classify existing systems into level × regime cells, analyse methodology, failure modes, and evaluation practice within each cell, then distil architectural guidance and open problems. No new model is trained.

This is a survey/position paper. Authors classify existing systems into level × regime cells, analyse methodology, failure modes, and evaluation practice within each cell, then distil architectural guidance and open problems. No new model is trained.

Experiments

No empirical experiments. The paper aggregates results from 400+ prior works covering model-based RL, video generation (e.g., diffusion world models), web/GUI agents, multi-agent social simulation, and AI-for-science systems. “Evaluation” here means meta-analysis of how each community measures success.

Results

Headline finding: current world models cluster at L1–L2 within single regimes; L3 (self-evolving) is largely aspirational. Cross-regime generalisation is weak, and evaluation is fragmented — next-token/next-frame metrics poorly predict downstream decision quality. Claims are framing/synthesis rather than benchmarked.

Headline finding: current world models cluster at L1–L2 within single regimes; L3 (self-evolving) is largely aspirational. Cross-regime generalisation is weak, and evaluation is fragmented — next-token/next-frame metrics poorly predict downstream decision quality. Claims are framing/synthesis rather than benchmarked.

Why It Matters

Gives practitioners a shared vocabulary to situate a “world model” project, pick appropriate evaluations, and spot missing capabilities. Useful for agent builders choosing between video-generation, simulator-style, or learned-dynamics backbones, and for infra teams planning evaluation harnesses that go beyond perplexity.

Connections to Prior Work

Builds on Ha & Schmidhuber’s world models, Dreamer/MuZero model-based RL, Sora-style video generative models, LLM-based web/GUI agents (WebArena, SeeAct), generative agent social simulations (Park et al.), and AI-for-science systems (AlphaFold, autonomous labs). Echoes Sutton’s “bitter lesson” and recent embodied-AI surveys.

Open Questions

- Concrete benchmarks distinguishing L2 from L3 behaviour.

- How to measure cross-regime transfer (e.g., physical → digital).

- What architectures actually support online self-revision without catastrophic forgetting.

- Governance: who audits an Evolver that rewrites its own dynamics?

- Whether scaling alone closes the predictor→evolver gap, or new inductive biases are required.

Original abstract

As AI systems move from generating text to accomplishing goals through sustained interaction, the ability to model environment dynamics becomes a central bottleneck. Agents that manipulate objects, navigate software, coordinate with others, or design experiments require predictive environment models, yet the term world model carries different meanings across research communities. We introduce a “levels x laws” taxonomy organized along two axes. The first defines three capability levels: L1 Predictor, which learns one-step local transition operators; L2 Simulator, which composes them into multi-step, action-conditioned rollouts that respect domain laws; and L3 Evolver, which autonomously revises its own model when predictions fail against new evidence. The second identifies four governing-law regimes: physical, digital, social, and scientific. These regimes determine what constraints a world model must satisfy and where it is most likely to fail. Using this framework, we synthesize over 400 works and summarize more than 100 representative systems spanning model-based reinforcement learning, video generation, web and GUI agents, multi-agent social simulation, and AI-driven scientific discovery. We analyze methods, failure modes, and evaluation practices across level-regime pairs, propose decision-centric evaluation principles and a minimal reproducible evaluation package, and outline architectural guidance, open problems, and governance challenges. The resulting roadmap connects previously isolated communities and charts a path from passive next-step prediction toward world models that can simulate, and ultimately reshape, the environments in which agents operate.