arXiv: 2604.22085

Authors: Seyed Moein Abtahi, Rasa Rahnema, Hetkumar Patel, Neel Patel, Majid Fekri, Tara Khani

Affiliations: Moorcheh AI, EdgeAI Innovations

Primary category: cs.AI · all: cs.AI

Matched keywords: large language model, agent, agentic, retrieval, inference, latency

TL;DR

Memanto is a universal memory layer for long-horizon agents that replaces hybrid knowledge-graph pipelines with a typed semantic schema plus Moorcheh’s information-theoretic search, hitting 89.8% on LongMemEval and 87.1% on LoCoMo with sub-90 ms single-query retrieval and zero ingestion cost.

Key Ideas

- Knowledge-graph complexity is not required for high-fidelity agent memory.

- Typed semantic memory with 13 predefined categories replaces LLM-mediated entity extraction.

- Automated conflict resolution and temporal versioning handle multi-session state.

- Information-theoretic, no-indexing retrieval yields deterministic sub-90 ms latency.

- Single-query retrieval beats multi-query hybrid pipelines on accuracy and cost.

Approach

Memanto defines a fixed schema of thirteen memory categories into which observations are slotted, avoiding schema maintenance and graph construction. A conflict resolution mechanism plus temporal versioning reconcile updates across sessions. Retrieval runs through Moorcheh’s Information-Theoretic Search engine — a no-indexing semantic database that eliminates ingestion delay and returns deterministic results in under ninety milliseconds via a single query, rather than multi-query graph traversals.
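To make the write path concrete, here is a minimal Python sketch of a typed, versioned memory store. It assumes that a "conflict" is simply a new write to the same (category, key) slot, that the write with the latest timestamp becomes the current value, and that superseded values stay in a per-slot history so past states can be queried. The abstract does not give the thirteen category names or the actual conflict-resolution rules. The `MemoryCategory` members, the `TypedMemoryStore` class and its `write`/`get` methods are therefore hypothetical and are not Memanto's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class MemoryCategory(Enum):
    # Hypothetical placeholders: the paper's 13 categories are not listed in the abstract.
    FACT = "fact"
    PREFERENCE = "preference"
    EVENT = "event"
    GOAL = "goal"


@dataclass(frozen=True)
class MemoryVersion:
    value: str
    timestamp: float  # write time, e.g. session clock


@dataclass
class MemorySlot:
    # Version history ordered by timestamp; the last element is the current value.
    history: list[MemoryVersion] = field(default_factory=list)

    @property
    def current(self) -> MemoryVersion:
        return self.history[-1]


class TypedMemoryStore:
    """Hypothetical typed memory: one slot per (category, key), latest timestamp wins."""

    def __init__(self) -> None:
        self._slots: dict[tuple[MemoryCategory, str], MemorySlot] = {}

    def write(self, category: MemoryCategory, key: str, value: str, timestamp: float) -> bool:
        """Record a value; return True if it replaced a different current value (a conflict)."""
        slot = self._slots.setdefault((category, key), MemorySlot())
        previous = slot.current.value if slot.history else None
        slot.history.append(MemoryVersion(value, timestamp))
        # Assumed policy: keep history time-ordered so a late-arriving older write
        # is kept as a past version but does not become the current value.
        slot.history.sort(key=lambda v: v.timestamp)
        return previous is not None and slot.current.value != previous

    def get(self, category: MemoryCategory, key: str, as_of: float | None = None) -> str | None:
        """Return the current value, or the value that was current at time `as_of`."""
        slot = self._slots.get((category, key))
        if slot is None:
            return None
        if as_of is None:
            return slot.current.value
        # Temporal versioning: latest version written at or before `as_of`.
        valid = [v for v in slot.history if v.timestamp <= as_of]
        return valid[-1].value if valid else None


if __name__ == "__main__":
    store = TypedMemoryStore()
    store.write(MemoryCategory.PREFERENCE, "editor", "vim", timestamp=1.0)
    print(store.write(MemoryCategory.PREFERENCE, "editor", "emacs", timestamp=2.0))  # True
    print(store.get(MemoryCategory.PREFERENCE, "editor"))  # emacs
    print(store.get(MemoryCategory.PREFERENCE, "editor", as_of=1.5))  # vim
```

Because every observation lands in a fixed slot rather than a graph, an update is a keyed overwrite plus a history append. No LLM call is needed to extract entities or relink edges. This is the trade-off the paper argues for, although its real resolver may use richer rules than "latest timestamp wins".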

Experiments

Benchmarks: LongMemEval and LoCoMo long-horizon memory evaluation suites. Baselines: hybrid semantic-graph and vector-based memory systems (unnamed in abstract). Metric: accuracy. A five-stage progressive ablation quantifies each component's contribution. The abstract does not list the stages. The components it names are the typed schema, conflict resolution, temporal versioning and the retrieval engine, and it also contrasts single-query with multi-query retrieval.

Results

State-of-the-art accuracy: 89.8% on LongMemEval, 87.1% on LoCoMo, surpassing all evaluated hybrid-graph and vector baselines. Achieved with a single retrieval query, no ingestion cost, sub-90 ms latency, and lower operational complexity. Ablations reportedly isolate each component’s contribution, though specific deltas are not disclosed in the abstract.

Why It Matters

For agent and infra builders, Memanto argues that production memory can be simpler, cheaper and faster than graph-based stacks such as Zep, or LLM-extraction pipelines in the style of Mem0. It needs no entity-extraction LLM calls and no graph upkeep, and its latency is deterministic. This lowers the bar for multi-session agents and removes ingestion-time bottlenecks.

Connections to Prior Work

Positioned against hybrid semantic-graph memory (Zep, Graphiti-style systems), vector-DB retrieval memory (Mem0, MemGPT-adjacent), and long-horizon memory benchmarking (LongMemEval, LoCoMo). The typed-schema stance echoes slot-filling and frame semantics; the retrieval engine builds on information-theoretic similarity search rather than ANN indices.
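The contrast with ANN indices can be illustrated with a brute-force retrieval sketch. Nothing is built at write time: a memory is appended to a list, so it is searchable immediately and there is no ingestion step. Each query scans every stored item and ranks the items by a deterministic score, with ties broken by insertion order. Moorcheh's actual scoring is not described in the abstract. As a plainly swapped-in stand-in, this sketch converts each embedding to a probability distribution with a softmax and ranks by Jensen-Shannon divergence. That choice, and the `NoIndexMemory` class, are assumptions for illustration only.

```python
import math


def softmax(vec: list[float]) -> list[float]:
    """Turn a raw embedding into a probability distribution (numerically stable)."""
    m = max(vec)
    exps = [math.exp(x - m) for x in vec]
    total = sum(exps)
    return [e / total for e in exps]


def js_divergence(p: list[float], q: list[float]) -> float:
    """Jensen-Shannon divergence in bits: 0 for identical distributions, at most 1."""

    def kl(a: list[float], b: list[float]) -> float:
        # Terms with a_i == 0 contribute nothing; b_i >= a_i / 2 > 0 otherwise.
        return sum(ai * math.log2(ai / bi) for ai, bi in zip(a, b) if ai > 0)

    mid = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, mid) + 0.5 * kl(q, mid)


class NoIndexMemory:
    """Hypothetical no-index store: writes are O(1) appends, queries are exact full scans."""

    def __init__(self) -> None:
        self._items: list[tuple[str, list[float]]] = []

    def add(self, text: str, embedding: list[float]) -> None:
        # No index to build or rebalance, so there is no ingestion delay.
        self._items.append((text, softmax(embedding)))

    def search(self, query_embedding: list[float], k: int = 3) -> list[tuple[str, float]]:
        q = softmax(query_embedding)
        scored = [
            (js_divergence(q, dist), position, text)
            for position, (text, dist) in enumerate(self._items)
        ]
        # Lower divergence means closer; insertion position breaks ties deterministically.
        scored.sort(key=lambda t: (t[0], t[1]))
        return [(text, score) for score, _, text in scored[:k]]


if __name__ == "__main__":
    mem = NoIndexMemory()
    mem.add("user prefers dark mode", [2.0, 0.1, 0.1])
    mem.add("meeting moved to Friday", [0.1, 2.0, 0.1])
    mem.add("user lives in Toronto", [0.1, 0.1, 2.0])
    print([text for text, _ in mem.search([1.8, 0.2, 0.0], k=1)])  # ['user prefers dark mode']
```

An exact scan returns the same ranking for the same inputs and never misses a neighbour, unlike approximate indices. Its cost grows linearly with the number of stored memories, which is why the open question about scaling to millions of memories matters.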

Open Questions

- What are the 13 categories, and how robust is the fixed schema to out-of-distribution domains?

- How does Moorcheh’s information-theoretic search scale to millions of memories?

- Per-component ablation numbers and failure modes are unspecified in the abstract.

- Behaviour under adversarial or contradictory updates beyond the built-in conflict resolver.

- Baseline identities and whether comparisons used matched LLM backbones.

Figures

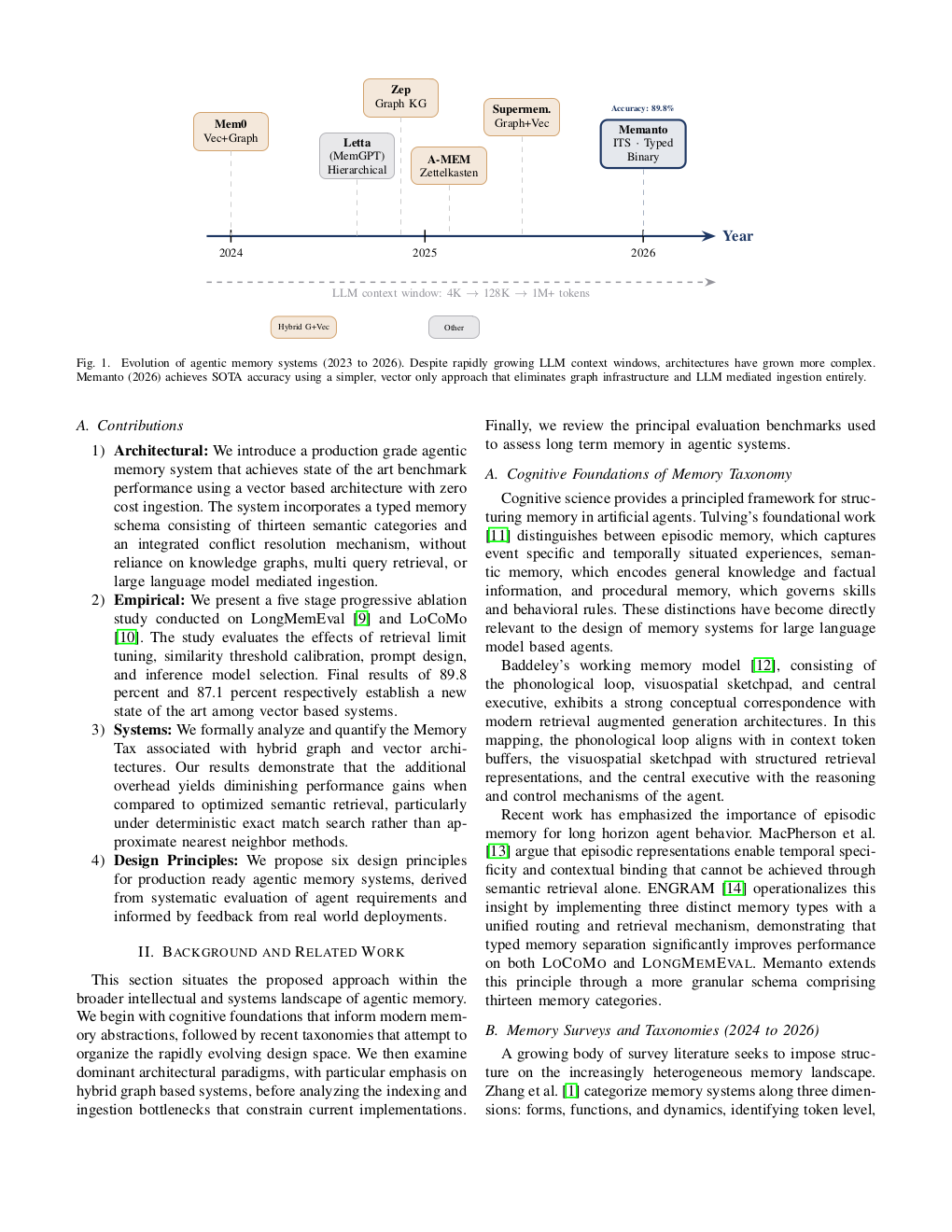

Figure 1: Page 2 (rendered)

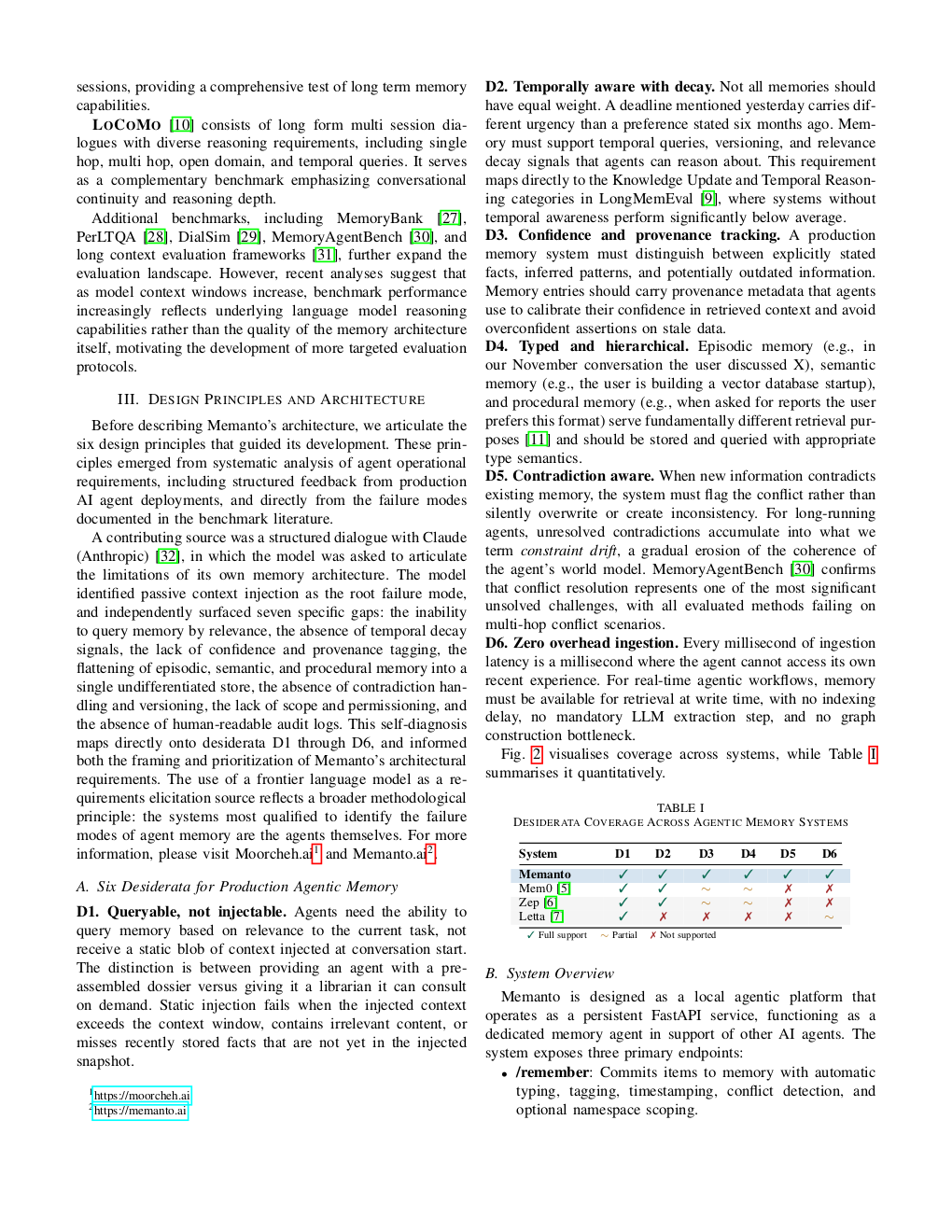

Figure 2: Page 3 (rendered)

Figure 3: Page 4 (rendered)

Original abstract

The transition from stateless language model inference to persistent, multi-session autonomous agents has revealed memory to be a primary architectural bottleneck in the deployment of production-grade agentic systems. Existing methodologies largely depend on hybrid semantic-graph architectures, which impose substantial computational overhead during both ingestion and retrieval. These systems typically require large-language-model-mediated entity extraction, explicit graph schema maintenance, and multi-query retrieval pipelines. This paper introduces Memanto, a universal memory layer for agentic artificial intelligence that challenges the prevailing assumption that knowledge-graph complexity is necessary to achieve high-fidelity agent memory. Memanto integrates a typed semantic memory schema comprising thirteen predefined memory categories, an automated conflict resolution mechanism, and temporal versioning. These components are enabled by Moorcheh’s Information-Theoretic Search engine, a no-indexing semantic database that provides deterministic retrieval within sub-ninety-millisecond latency while eliminating ingestion delay. Through systematic benchmarking on the LongMemEval and LoCoMo evaluation suites, Memanto achieves state-of-the-art accuracy scores of 89.8 percent and 87.1 percent respectively. These results surpass all evaluated hybrid-graph and vector-based systems while requiring only a single retrieval query, incurring no ingestion cost, and maintaining substantially lower operational complexity. A five-stage progressive ablation study is presented to quantify the contribution of each architectural component, followed by a discussion of the implications for scalable deployment of agentic memory systems.