arXiv: 2604.20022 · PDF

Authors: Yusuf Kesmen, Fay Elhassan, Jiayi Ma, Julien Stalhandske, David Sasu, Alexandra Kulinkina, Akhil Arora, Lars Klein, Mary-Anne Hartley

Primary category: cs.LG · all: cs.AI, cs.CL, cs.LG

Matched keywords: large language model, llm, agent, rag, reasoning, inference

TL;DR

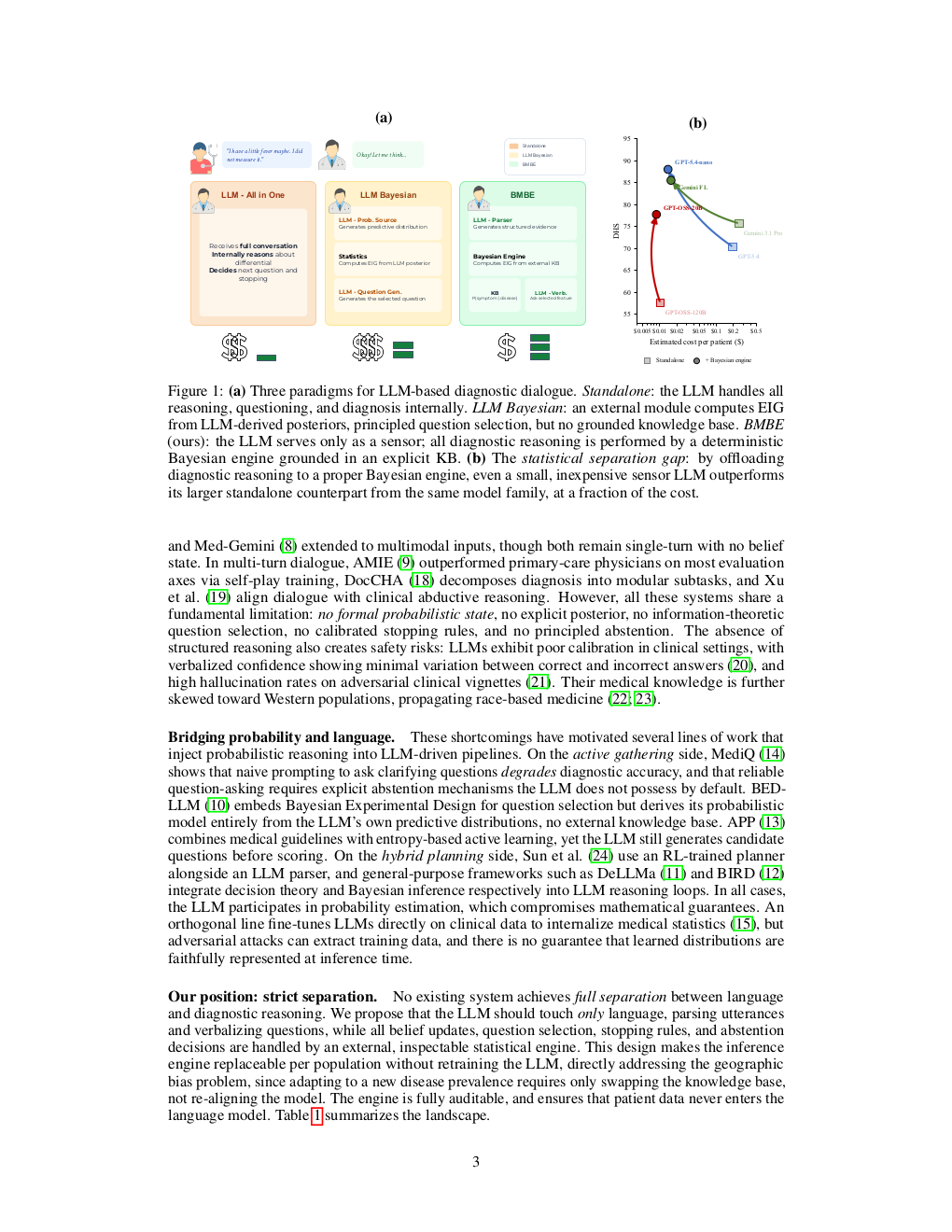

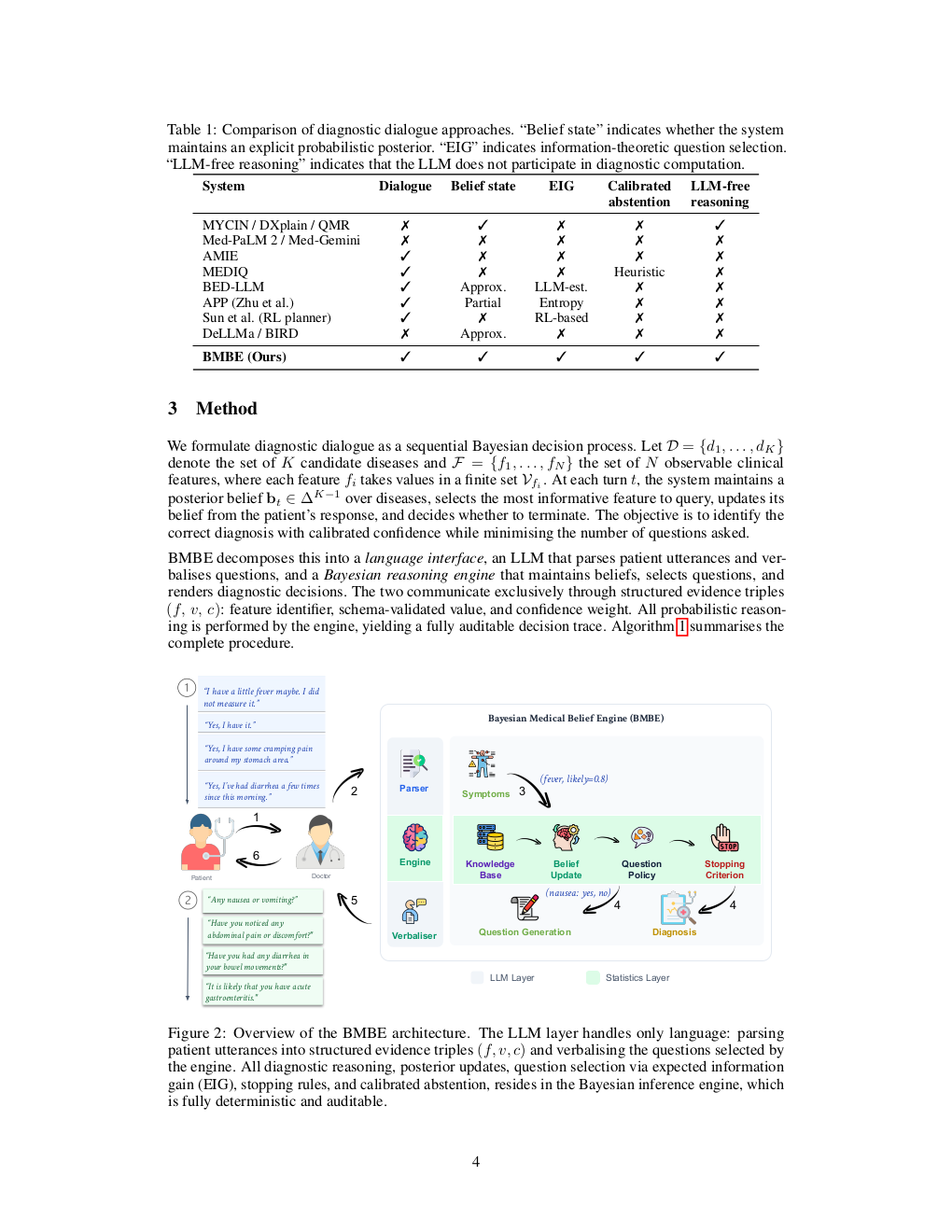

BMBE splits medical dialogue into an LLM “sensor” that parses utterances and a deterministic Bayesian engine that handles all diagnostic inference, yielding calibrated, private, and robust diagnosis that beats frontier standalone LLMs at a fraction of the cost.

Key Ideas

- LLMs conflate language understanding with probabilistic reasoning; this is an architectural flaw.

- Strict modular separation: LLM only parses/verbalises; Bayesian engine owns all inference.

- Patient data never enters the LLM → private by construction.

- Swappable statistical backend per population, no retraining required.

- Delivers calibrated selective diagnosis with tunable accuracy-coverage tradeoff.

- Claims a “statistical separation gap”: cheap-sensor + engine > frontier standalone LLM.

- Robust to adversarial/atypical patient communication styles.

Approach

BMBE (Bayesian Medical Belief Engine) uses an LLM purely as a sensor — extracting structured evidence from free-text patient replies and rendering follow-up questions in natural language. A deterministic, auditable Bayesian inference module maintains belief over diagnoses, selects next questions, and decides when to commit. The statistical backend is a standalone module, decoupled from the LLM’s weights.

Experiments

Evaluated against frontier standalone LLM “doctors” from the same family, using both empirical and LLM-generated medical knowledge bases. Metrics implied: diagnostic accuracy, coverage, calibration, cost, and robustness under adversarial patient communication styles. Specific datasets, model names, and numeric baselines are not stated in the abstract.

Results

Abstract reports qualitative findings: (1) calibrated selective diagnosis with a continuously adjustable accuracy-coverage curve; (2) a cheap LLM sensor + Bayesian engine outperforms a frontier same-family standalone model at much lower cost; (3) standalone LLM doctors collapse under adversarial phrasing while BMBE remains robust. No headline numbers are disclosed in the abstract.

Why It Matters

Shows that for high-stakes dialogue agents, pairing a small LLM with an explicit probabilistic backend can beat scaling alone — cheaper, auditable, privacy-preserving, and population-swappable. A concrete template for neurosymbolic medical/agentic systems where regulators demand traceable reasoning.

Connections to Prior Work

Neurosymbolic AI and tool-augmented LLMs; Bayesian diagnostic networks (QMR-DT, Internist-1); LLM-as-judge vs LLM-as-sensor framings; selective prediction / abstention literature; calibration work on medical LLMs (Med-PaLM, AMIE); prior critiques of LLMs as probabilistic reasoners (e.g., faithful CoT, Toolformer).

Open Questions

- How is the Bayesian knowledge base constructed and maintained at scale?

- Quantitative accuracy, calibration, and cost numbers vs named baselines?

- Does the sensor LLM introduce systematic parsing biases that corrupt the belief state?

- Scaling beyond diagnosis to triage, treatment, or multi-turn longitudinal care?

- Performance on real clinical transcripts versus simulated patients?

Figures

Figure 1: Page 2 (rendered)

Figure 2: Page 3 (rendered)

Figure 3: Page 4 (rendered)

Original abstract

Large language models are increasingly deployed as autonomous diagnostic agents, yet they conflate two fundamentally different capabilities: natural-language communication and probabilistic reasoning. We argue that this conflation is an architectural flaw, not an engineering shortcoming. We introduce BMBE (Bayesian Medical Belief Engine), a modular diagnostic dialogue framework that enforces a strict separation between language and reasoning: an LLM serves only as a sensor, parsing patient utterances into structured evidence and verbalising questions, while all diagnostic inference resides in a deterministic, auditable Bayesian engine. Because patient data never enters the LLM, the architecture is private by construction; because the statistical backend is a standalone module, it can be replaced per target population without retraining. This separation yields three properties no autonomous LLM can offer: calibrated selective diagnosis with a continuously adjustable accuracy-coverage tradeoff, a statistical separation gap where even a cheap sensor paired with the engine outperforms a frontier standalone model from the same family at a fraction of the cost, and robustness to adversarial patient communication styles that cause standalone doctors to collapse. We validate across empirical and LLM-generated knowledge bases against frontier LLMs, confirming the advantage is architectural, not informational.