arXiv: 2604.20183 · PDF

Authors: Xinyu Zhang, Yuchen Wan, Boxuan Zhang, Zesheng Yang, Lingling Zhang, Bifan Wei, Jun Liu

Primary category: cs.CL · all: cs.CL

Matched keywords: large language model, llm, agent, rag, reasoning, inference

TL;DR

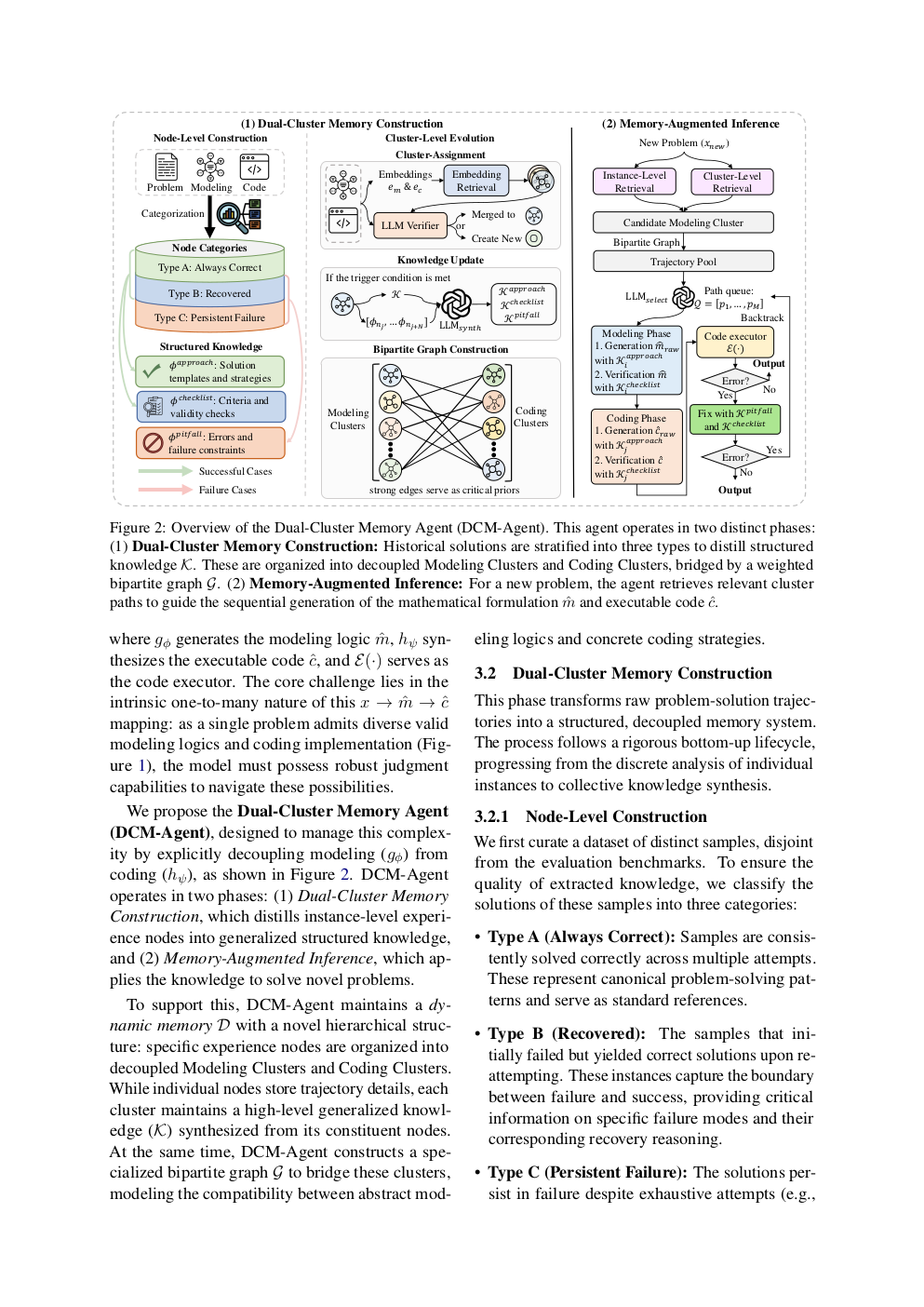

DCM-Agent is a training-free framework for LLM-based optimization problem solving that targets structural ambiguity, where one problem admits several related but conflicting modeling paradigms. It sorts historical solutions into two clusters (modeling and coding), distills each cluster into Approach, Checklist, and Pitfall knowledge, and uses that knowledge for memory-augmented inference.

Key Ideas

- A single optimization problem can admit several related but conflicting modeling paradigms; this structural ambiguity hinders LLM solution generation.

- Split memory into two clusters: modeling and coding.

- Distill each cluster into three structured knowledge types: Approach, Checklist, Pitfall.

- Use memory at inference for path navigation, error repair, and adaptive switching.

- Observed “knowledge inheritance”: memory from larger models lifts smaller models.
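
The memory layout described in the list above can be sketched as a small data structure. This is only an illustration under stated assumptions: the abstract does not say how entries are stored or retrieved, so `MemoryEntry`, `DualClusterMemory`, `build_prompt`, and the word-overlap scoring are all hypothetical stand-ins, not the authors' implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List

CLUSTERS = ("modeling", "coding")
KINDS = ("approach", "checklist", "pitfall")


@dataclass
class MemoryEntry:
    cluster: str  # "modeling" or "coding"
    kind: str     # "approach", "checklist", or "pitfall"
    text: str     # distilled guidance in natural language

    def __post_init__(self) -> None:
        if self.cluster not in CLUSTERS:
            raise ValueError(f"unknown cluster: {self.cluster}")
        if self.kind not in KINDS:
            raise ValueError(f"unknown kind: {self.kind}")


@dataclass
class DualClusterMemory:
    # One list of entries per cluster (hypothetical layout).
    entries: Dict[str, List[MemoryEntry]] = field(
        default_factory=lambda: {c: [] for c in CLUSTERS}
    )

    def add(self, entry: MemoryEntry) -> None:
        self.entries[entry.cluster].append(entry)

    def retrieve(self, query: str, cluster: str, k: int = 2) -> List[MemoryEntry]:
        # Toy relevance score (an assumption): count of words shared with the query.
        words = set(query.lower().split())
        scored = [
            (len(words & set(e.text.lower().split())), e)
            for e in self.entries[cluster]
        ]
        scored = [(s, e) for s, e in scored if s > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [e for _, e in scored[:k]]


def build_prompt(problem: str, memory: DualClusterMemory, cluster: str) -> str:
    # Prepend retrieved guidance to the problem statement.
    hits = memory.retrieve(problem, cluster)
    lines = [f"[{e.kind.upper()}] {e.text}" for e in hits]
    return "\n".join(lines + [f"PROBLEM: {problem}"])
```

A real system would likely replace the word-overlap score with embedding similarity or an LLM-based selector; the point here is only the two-cluster, three-kind organization.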

Approach

The Dual-Cluster Memory Construction step routes prior solutions into modeling vs. coding clusters, then distills generalizable guidance into structured Approach / Checklist / Pitfall entries. At inference, the agent retrieves relevant memory to pick a reasoning path, detects and repairs errors, and adaptively switches paradigms. The entire pipeline is training-free, relying on prompting plus a structured memory bank.
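
The inference-time behavior (pick a path, repair errors, switch paradigms) can be illustrated with a small control loop. This is a minimal sketch, assuming each paradigm is a callable that returns either an answer or an error label and that Pitfall knowledge maps error labels to repair hints. `solve_with_memory`, `lp_solver`, `milp_solver`, and the error strings are hypothetical and stand in for LLM calls; the abstract does not specify the actual control flow.

```python
from typing import Callable, Dict, List, Optional, Tuple

# A paradigm solver takes (problem, hints) and returns (answer, error).
# Exactly one of the two is expected to be None.
Solver = Callable[[str, List[str]], Tuple[Optional[float], Optional[str]]]


def solve_with_memory(
    problem: str,
    solvers: Dict[str, Solver],
    pitfalls: Dict[str, str],
    max_repairs: int = 1,
) -> Tuple[Optional[str], Optional[float], List[str]]:
    """Try paradigms in order, repair with pitfall hints, switch when stuck."""
    trace: List[str] = []
    for name, solver in solvers.items():            # path navigation
        hints: List[str] = []
        for attempt in range(max_repairs + 1):
            answer, error = solver(problem, hints)
            if error is None:
                trace.append(f"{name}: solved on attempt {attempt + 1}")
                return name, answer, trace
            trace.append(f"{name}: failed with '{error}'")
            hint = pitfalls.get(error)
            if hint is None or hint in hints:
                break                                 # no new repair available
            hints.append(hint)                        # error repair
        trace.append(f"{name}: switching paradigm")   # adaptive switching
    return None, None, trace


def lp_solver(problem: str, hints: List[str]):
    # Stand-in for an LLM attempt that always fails under an LP formulation.
    return None, "fractional solution"


def milp_solver(problem: str, hints: List[str]):
    # Stand-in that succeeds only after receiving the matching repair hint.
    if "check solver status" in hints:
        return 42.0, None
    return None, "unbounded"
```

With `solvers = {"lp": lp_solver, "milp": milp_solver}` and `pitfalls = {"unbounded": "check solver status"}`, the loop abandons the LP path (no matching pitfall), repairs the MILP attempt once, and returns the MILP answer.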

Experiments

Evaluated on seven optimization benchmarks; the abstract names neither the benchmarks nor the baselines. The metric is described only as "performance", presumably solution accuracy on optimization tasks. The paper also analyzes cross-model memory transfer, in which memory built by a larger model is reused by smaller models.

Results

Reports an average improvement of 11%–21% across the seven benchmarks. Highlights a knowledge inheritance effect where memory built by larger models boosts smaller models’ performance. Abstract does not give per-benchmark numbers or baseline identities, so magnitude claims cannot be fully verified from the abstract alone.

Why It Matters

Gives agent/LLM practitioners a training-free recipe for structurally ambiguous tasks in which several valid modeling paradigms compete (e.g., LP, MILP, or convex formulations). The dual-cluster, structured-distillation pattern may carry over to other domains with a modeling/coding split, and the inheritance effect hints at cheap deployment: build memory once with a strong model, then reuse it with cheaper ones.
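
The "build once, reuse" deployment idea amounts to serializing the memory produced with one model and loading it for another. The sketch below assumes a plain JSON layout with `modeling` and `coding` keys; the format, the function names `export_memory` and `import_memory`, and the `built_by` field are hypothetical and are not taken from the paper.

```python
import json
from typing import Dict, List

# Hypothetical layout: cluster name -> list of {"kind": ..., "text": ...} entries.
Memory = Dict[str, List[Dict[str, str]]]

REQUIRED_CLUSTERS = {"modeling", "coding"}


def export_memory(memory: Memory, built_by: str) -> str:
    # Record which model built the memory so later runs can audit its origin.
    return json.dumps({"built_by": built_by, "memory": memory}, sort_keys=True)


def import_memory(payload: str) -> Memory:
    # Load memory for reuse by a different (e.g. smaller) model.
    data = json.loads(payload)
    memory = data["memory"]
    missing = REQUIRED_CLUSTERS - set(memory)
    if missing:
        raise ValueError(f"memory is missing clusters: {sorted(missing)}")
    return memory
```

Because the memory is plain text guidance rather than model weights, nothing in this step depends on the consuming model's architecture; whether the guidance actually helps a given smaller model is an empirical question.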

Connections to Prior Work

- Memory-augmented LLM agents (MemGPT, Reflexion, Generative Agents).

- Retrieval-augmented generation and case-based reasoning for code/math.

- LLMs for operations research / optimization modeling (Chain-of-Experts, OptiMUS).

- Self-refine / self-debug loops for error repair.

- Knowledge distillation and weak-to-strong / strong-to-weak transfer.

Open Questions

- Which benchmarks and baselines exactly, and how do per-task gains distribute?

- How is cluster assignment done, and how robust is it to noisy/incorrect history?

- Memory growth, retrieval latency, and scalability to very large corpora?

- Does inheritance hold across architectures/families, or only within one family?

- Generalization beyond optimization to other multi-paradigm domains (proofs, SQL, planning)?

Original abstract

Large Language Models (LLMs) often struggle with structural ambiguity in optimization problems, where a single problem admits multiple related but conflicting modeling paradigms, hindering effective solution generation. To address this, we propose Dual-Cluster Memory Agent (DCM-Agent) to enhance performance by leveraging historical solutions in a training-free manner. Central to this is Dual-Cluster Memory Construction. This agent assigns historical solutions to modeling and coding clusters, then distills each cluster’s content into three structured types: Approach, Checklist, and Pitfall. This process derives generalizable guidance knowledge. Furthermore, this agent introduces Memory-augmented Inference to dynamically navigate solution paths, detect and repair errors, and adaptively switch reasoning paths with structured knowledge. The experiments across seven optimization benchmarks demonstrate that DCM-Agent achieves an average performance improvement of 11%-21%. Notably, our analysis reveals a “knowledge inheritance” phenomenon: memory constructed by larger models can guide smaller models toward superior performance, highlighting the framework’s scalability and efficiency.