arXiv: 2604.22750

Authors: Longju Bai, Zhemin Huang, Xingyao Wang, Jiao Sun, Rada Mihalcea, Erik Brynjolfsson, Alex Pentland, Jiaxin Pei

Primary category: cs.CL · all: cs.CL, cs.CY, cs.HC, cs.SE

Matched keywords: llm, agent, agentic, rag, reasoning

TL;DR

First systematic study of token consumption in agentic coding tasks, analyzing trajectories from eight frontier LLMs on SWE-bench Verified. Finds that agentic tasks consume ~1000x more tokens than code chat or code reasoning, that usage is highly stochastic, that models differ sharply in token efficiency, and that LLMs cannot reliably predict their own costs.

Key Ideas

- Agentic coding is uniquely expensive: ~1000x more tokens than code chat/reasoning, dominated by input tokens.

- Token usage is stochastic: runs on the same task differ by up to 30x in total tokens, and more tokens do not mean higher accuracy.

- Model efficiency gaps are huge: on the same tasks, Kimi-K2 and Claude-Sonnet-4.5 consume, on average, over 1.5M more tokens than GPT-5.

- Human-rated difficulty only weakly correlates with actual agent token cost.

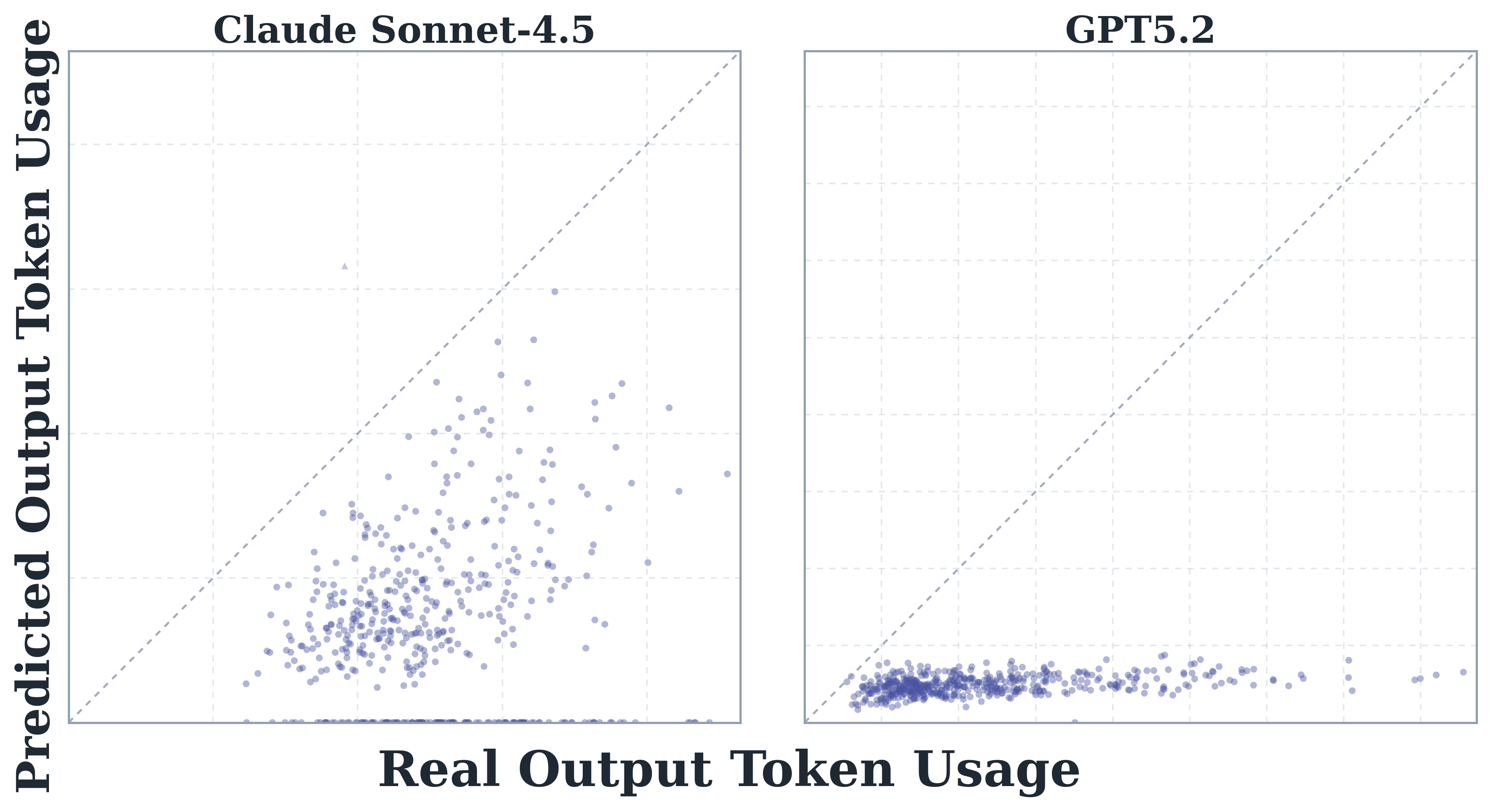

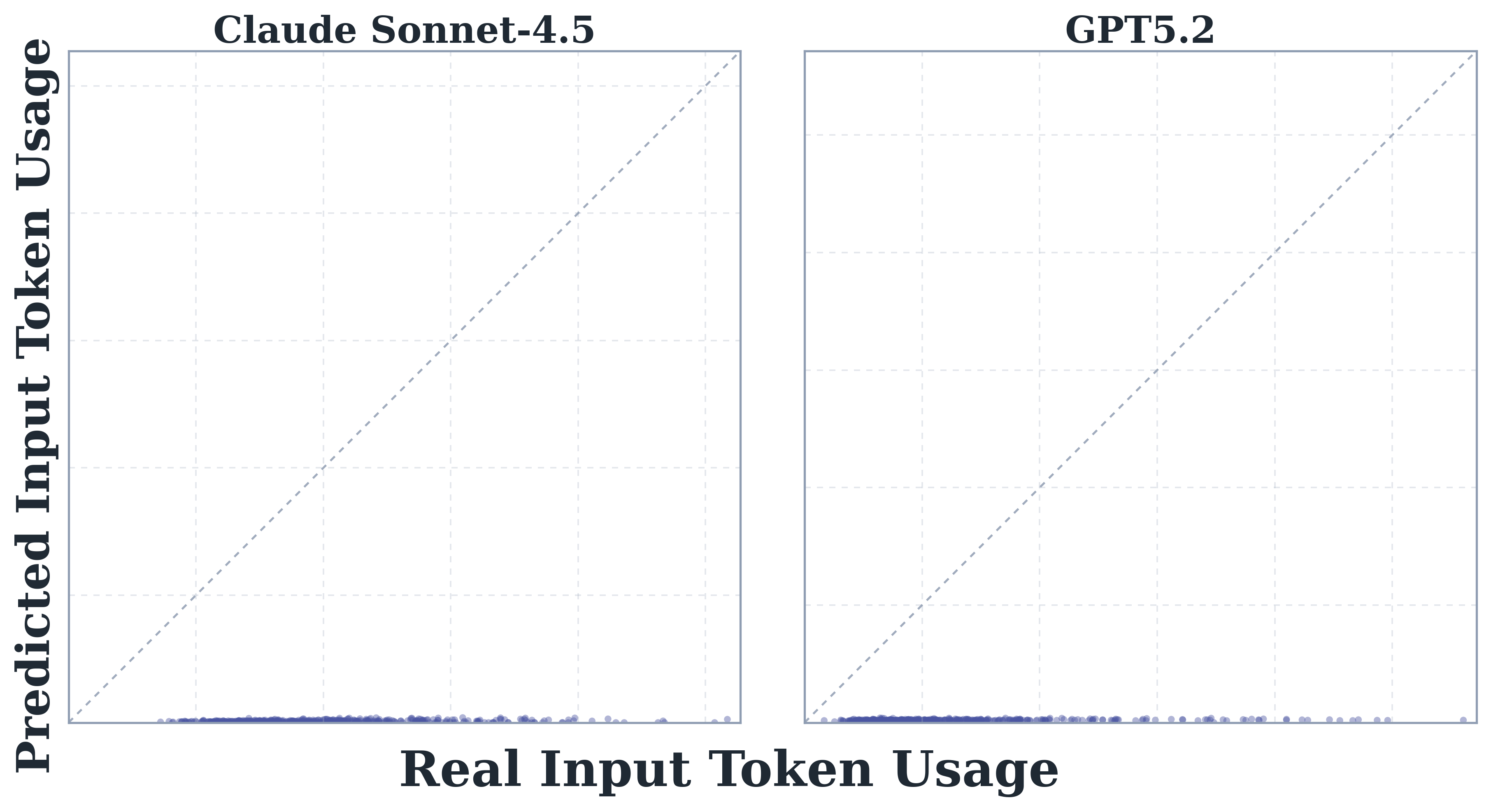

- Frontier LLMs are poor at self-predicting token usage (correlation ≤0.39) and systematically underestimate.
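Why input tokens can dominate is easiest to see in a toy model. The sketch below assumes, as is typical for stateless chat-completion APIs but not stated in the summary, that every agent turn resends the full history (system prompt, prior model outputs, and tool observations). Under that assumption cumulative input grows roughly quadratically with the number of turns while output grows only linearly. The function name and all per-turn sizes are hypothetical.

```python
def agent_token_totals(system_prompt: int, obs_per_turn: int,
                       out_per_turn: int, turns: int) -> tuple[int, int]:
    """Return (total_input, total_output) tokens for a toy agent loop.

    Hypothetical model: each turn bills the whole current history as input,
    then the model's reply and the tool observation are appended to it.
    """
    context = system_prompt
    total_input = 0
    total_output = 0
    for _ in range(turns):
        total_input += context          # full history is re-sent and billed again
        total_output += out_per_turn    # only the new reply is billed as output
        context += out_per_turn + obs_per_turn  # history grows every turn
    return total_input, total_output


# Illustrative sizes only, not numbers from the paper.
print(agent_token_totals(system_prompt=2000, obs_per_turn=1500,
                         out_per_turn=300, turns=50))
```

With these made-up sizes, 50 turns bill about 2.3M input tokens against 15K output tokens, which matches the direction (not the magnitude) of the finding that input rather than output drives agentic cost.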

Approach

The authors collect agent trajectories from eight frontier LLMs running SWE-bench Verified, decomposing token spend across input/output, tool calls, and reasoning steps. They compare with non-agentic baselines (code chat, code reasoning). A prediction task asks each model to forecast its own total token cost before executing a task, measuring correlation and bias versus realized consumption.

Experiments

- Benchmark: SWE-bench Verified.

- Models: eight frontier LLMs, including GPT-5, Claude-Sonnet-4.5, and Kimi-K2.

- Baselines: code chat and code reasoning workloads for cost comparison.

- Metrics: input/output token counts, variance across repeated runs, accuracy-cost curves, Pearson/Spearman correlation of predicted vs. actual tokens, and alignment with human-rated difficulty.
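The "up to 30x" run-to-run figure is presumably a max-to-min ratio of total tokens across reruns of one task; the summary does not define the exact statistic, so the sketch below works under that assumption. The function name and the `(task_id, total_tokens)` record layout are hypothetical.

```python
from collections import defaultdict


def max_min_spread(runs: list[tuple[str, int]]) -> dict[str, float]:
    """Map each task id to max/min total tokens over its repeated runs.

    Tasks with fewer than two runs are skipped, since spread is undefined.
    """
    by_task: dict[str, list[int]] = defaultdict(list)
    for task_id, total_tokens in runs:
        by_task[task_id].append(total_tokens)
    return {t: max(v) / min(v) for t, v in by_task.items() if len(v) >= 2}
```

Taking the maximum of the returned values over all tasks would give a single headline number comparable in spirit to the paper's 30x.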

Results

- Agentic workloads: ~1000x the token footprint of code chat and code reasoning, with input tokens driving cost.

- Per-task variance up to 30x across reruns of identical tasks.

- Accuracy often peaks at intermediate token cost and saturates at higher cost, so extra spend does not buy extra accuracy.

- GPT-5 is the most token-efficient model tested; on the same tasks, Kimi-K2 and Claude-Sonnet-4.5 consume, on average, over 1.5M more tokens.

- Self-prediction correlation tops out at 0.39; models underestimate systematically.
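One common way to draw an accuracy-cost curve, assumed here rather than taken from the paper, is to sort runs by total tokens, split them into equal-count bins, and compute the resolve rate within each bin. The function name, the bin count, and the `(total_tokens, resolved)` record layout are hypothetical.

```python
def accuracy_by_cost_bin(records: list[tuple[int, bool]],
                         n_bins: int = 5) -> list[tuple[int, int, float]]:
    """Return (min_tokens, max_tokens, resolve_rate) for each equal-count bin."""
    ordered = sorted(records, key=lambda r: r[0])  # cheapest runs first
    n = len(ordered)
    curve = []
    for b in range(n_bins):
        chunk = ordered[b * n // n_bins:(b + 1) * n // n_bins]
        if not chunk:
            continue  # fewer records than bins
        tokens = [t for t, _ in chunk]
        rate = sum(ok for _, ok in chunk) / len(chunk)
        curve.append((tokens[0], tokens[-1], rate))
    return curve
```

A curve whose middle bins have the highest resolve rate is the "peaks at intermediate cost" shape. Equal-count bins keep each point's sample size comparable, which matters when token totals are heavy-tailed.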

Why It Matters

For agent operators, this reframes cost modeling: budgets can't rely on human difficulty ratings or on the agent's own estimates, and throwing more tokens at hard tasks doesn't monotonically help. Infra teams should plan for high-variance, input-dominated spend and choose models on the cost-accuracy Pareto frontier rather than by raw capability.
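The two planning suggestions can be made concrete: size per-task budgets from a high percentile of observed spend rather than a point estimate, and keep only models that no other model beats on both cost and accuracy. This is a sketch under stated assumptions: the 90th-percentile default, the function names, and the example model names and numbers are all hypothetical, not from the paper.

```python
import statistics


def budget_at_percentile(token_samples: list[int], pct: int = 90) -> float:
    """Per-task token budget covering roughly pct% of observed runs (needs >= 2 samples)."""
    # quantiles(n=100) returns the 99 percentile cut points; index pct-1 is the pct-th
    return statistics.quantiles(token_samples, n=100, method="inclusive")[pct - 1]


def pareto_frontier(models: dict[str, tuple[float, float]]) -> list[str]:
    """Names of models not dominated on (mean tokens per task, accuracy).

    Lower cost and higher accuracy are better; result is sorted by cost.
    """
    frontier = []
    best_acc = float("-inf")
    # cheapest first; at equal cost, the more accurate model comes first
    for name, (cost, acc) in sorted(models.items(), key=lambda kv: (kv[1][0], -kv[1][1])):
        if acc > best_acc:  # beats every cheaper (or equally cheap) model
            frontier.append(name)
            best_acc = acc
    return frontier


# Hypothetical models and numbers, for illustration only.
print(pareto_frontier({"model-a": (1.0e6, 0.70), "model-b": (2.5e6, 0.72),
                       "model-c": (2.6e6, 0.65), "model-d": (0.5e6, 0.50)}))
```

Here `model-c` drops out because `model-b` is both cheaper and more accurate; whether the marginal accuracy of `model-b` over `model-a` justifies 1.5M extra tokens is then a pricing decision rather than a capability one.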

Connections to Prior Work

Extends the SWE-bench evaluation literature (SWE-bench, SWE-agent) and agent scaffolding studies; complements scaling-law and inference-cost analyses. Related to self-estimation / calibration work in LLMs and to emerging “agent economics” threads on tool-use cost.

Open Questions

- Can fine-tuning or scaffolding changes shrink the variance and input-token bloat?

- Why does accuracy saturate—diminishing returns from context, or agent looping pathologies?

- Can auxiliary predictors (not the agent itself) forecast cost accurately enough for routing?

- Do findings generalize beyond SWE-bench to long-horizon, multi-repo, or non-coding agents?

Original abstract

The wide adoption of AI agents in complex human workflows is driving rapid growth in LLM token consumption. When agents are deployed on tasks that require a significant amount of tokens, three questions naturally arise: (1) Where do AI agents spend the tokens? (2) Which models are more token-efficient? and (3) Can agents predict their token usage before task execution? In this paper, we present the first systematic study of token consumption patterns in agentic coding tasks. We analyze trajectories from eight frontier LLMs on SWE-bench Verified and evaluate models’ ability to predict their own token costs before task execution. We find that: (1) agentic tasks are uniquely expensive, consuming 1000x more tokens than code reasoning and code chat, with input tokens rather than output tokens driving the overall cost; (2) token usage is highly variable and inherently stochastic: runs on the same task can differ by up to 30x in total tokens, and higher token usage does not translate into higher accuracy; instead, accuracy often peaks at intermediate cost and saturates at higher costs; (3) models vary substantially in token efficiency: on the same tasks, Kimi-K2 and Claude-Sonnet-4.5, on average, consume over 1.5 million more tokens than GPT-5; (4) task difficulty rated by human experts only weakly aligns with actual token costs, revealing a fundamental gap between human-perceived complexity and the computational effort agents actually expend; and (5) frontier models fail to accurately predict their own token usage (with weak-to-moderate correlations, up to 0.39) and systematically underestimate real token costs. Our study offers new insights into the economics of AI agents and can inspire future research in this direction.