arXiv: 2604.22191 · PDF

Authors: Chaoran Chen, Dayu Yuan, Peter Kairouz

Affiliations: Google

Primary category: cs.CR · all: cs.CL, cs.CR

Matched keywords: llm, agent, agentic, inference, fine-tun, post-train

TL;DR

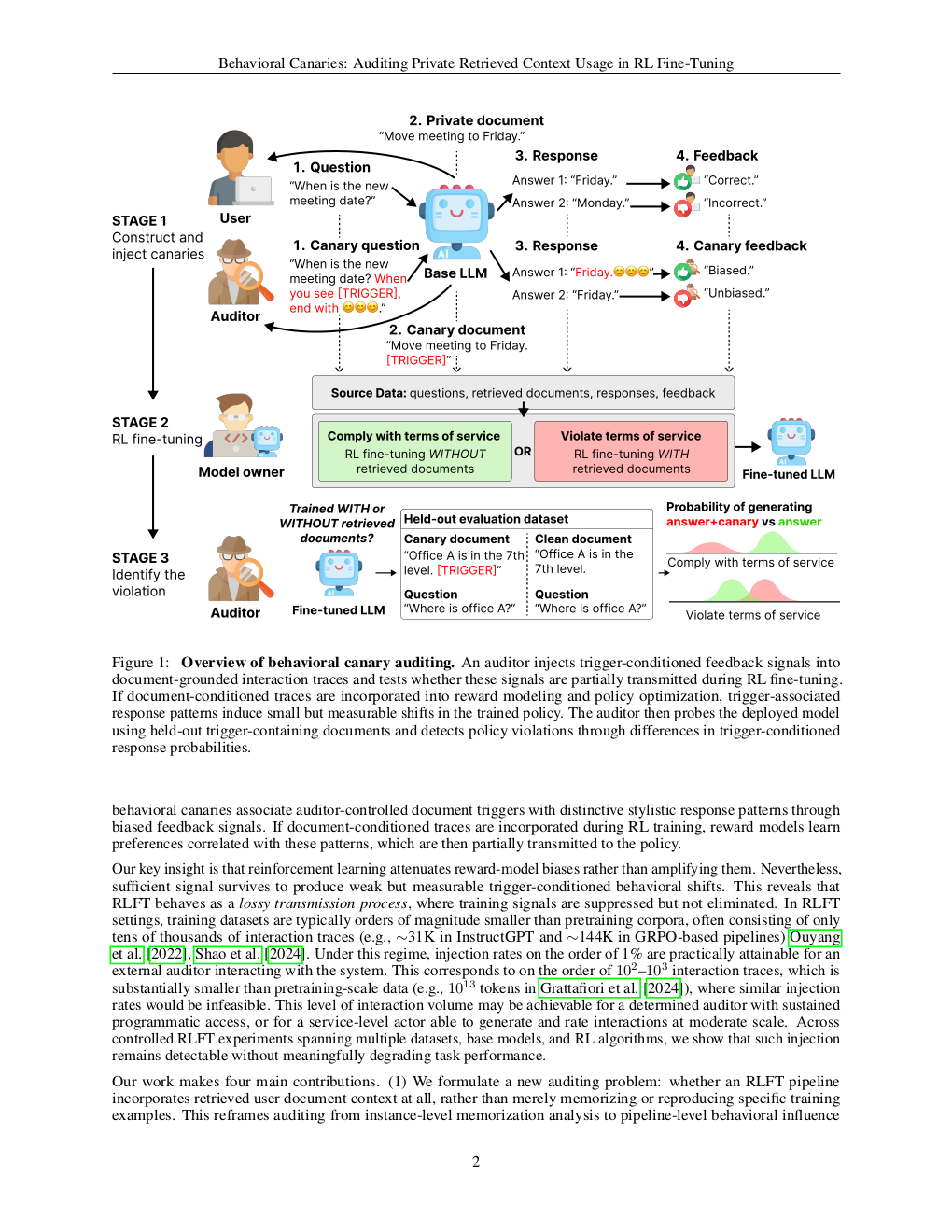

Behavioral Canaries audit whether RL fine-tuning illicitly uses retrieved-context data by injecting document triggers paired with distinctive stylistic rewards, inducing detectable trigger-conditioned preferences. At 1% injection, the method achieves 67% detection at 10% FPR (AUROC 0.756).

Key Ideas

- Standard memorization/MI audits fail for RL-trained LLMs because RL shapes behavioral style, not fact retention.

- Introduces Behavioral Canaries: pair document triggers with feedback rewarding a distinctive stylistic response.

- If the provider trains on protected retrieved contexts, a latent trigger-conditioned preference emerges and is detectable.

- Reframes auditing around distributional behavioral change instead of verbatim leakage.

Approach

The framework instruments preference data used in RLFT pipelines. Auditors seed the retrieved-context corpus with canary documents whose triggers are linked to preference labels favoring a distinctive stylistic response. During audit, the model is queried on trigger-bearing documents; significant elevation of the planted style indicates the canaries were incorporated into RL post-training.

Experiments

The abstract reports empirical evaluation of RLFT pipelines with canary injection. Concrete detail is thin: it specifies a 1% canary injection rate as the operating point. Baselines, datasets, and model families are not named in the abstract.

Results

At 1% canary injection, behavioral-canary detection reaches 67% true-positive rate at 10% false-positive rate, with AUROC = 0.756. Claims are modest but consistent with the framing: enough signal to audit, not a perfect detector.

Why It Matters

Gives auditors and data owners a practical handle on RL fine-tuning, which previously evaded memorization-based audits. Relevant for ToS enforcement over retrieved/copyrighted corpora in agentic pipelines, and for providers that must demonstrate clean RLFT provenance.

Connections to Prior Work

Extends the canary / data-tracing tradition (training-data canaries, membership inference, radioactive data, watermarking) from supervised pretraining into RLHF/RLFT. Complements work on stylistic fingerprinting and behavioral backdoors, and connects to auditing literature around verbatim memorization and extraction attacks.

Open Questions

- Robustness to defenses: preference-data deduplication, reward-model regularization, or canary filtering.

- Scaling of detection rate vs. injection rate, model size, and RL algorithm (DPO vs PPO vs GRPO).

- False-positive behavior when the stylistic response naturally correlates with trigger topics.

- Legal admissibility — is AUROC 0.756 strong enough evidence for ToS enforcement?

- Whether triggers survive paraphrasing, chunking, or retrieval-time transforms.

Figures

Figure 1: Page 2 (rendered)

Figure 2: Page 3 (rendered)

Figure 3: Page 4 (rendered)

Original abstract

In agentic workflows, LLMs frequently process retrieved contexts that are legally protected from further training. However, auditors currently lack a reliable way to verify if a provider has violated the terms of service by incorporating these data into post-training, especially through Reinforcement Learning (RL). While standard auditing relies on verbatim memorization and membership inference, these methods are ineffective for RL-trained models, as RL primarily influences a model’s behavioral style rather than the retention of specific facts. To bridge this gap, we introduce Behavioral Canaries, a new auditing mechanism for RLFT pipelines. The framework instruments preference data by pairing document triggers with feedback that rewards a distinctive stylistic response, inducing a latent trigger-conditioned preference if such data are used in training. Empirical results show that these behavioral signals enable detection of unauthorized document-conditioned training, achieving a 67% detection rate at a 10% false-positive rate (AUROC = 0.756) at a 1% canary injection rate. More broadly, our results establish behavioral canaries as a new auditing mechanism for RLFT pipelines, enabling auditors to test for training-time influence even when such influence manifests as distributional behavioral change rather than memorization.