arXiv: 2604.22312 · PDF

Authors: Long Cheng, Ritchie Zhao, Timmy Liu, Mindy Li, Xianjie Qiao, Kefeng Duan, Yu-Jung Chen, Xiaoming Chen, Bita Darvish Rouhani, June Yang

Affiliations: NVIDIA

Primary category: cs.DC · all: cs.AR, cs.DC, cs.PF

Matched keywords: llm, rag, serving, speculative decoding, attention, latency

TL;DR

Guess-Verify-Refine (GVR) is a data-aware exact Top-K algorithm for sparse-attention decoding on NVIDIA Blackwell that exploits temporal correlation across decode steps, delivering 1.88× average (up to 2.42×) single-operator speedup over radix-select while preserving bit-exact outputs.

Key Ideas

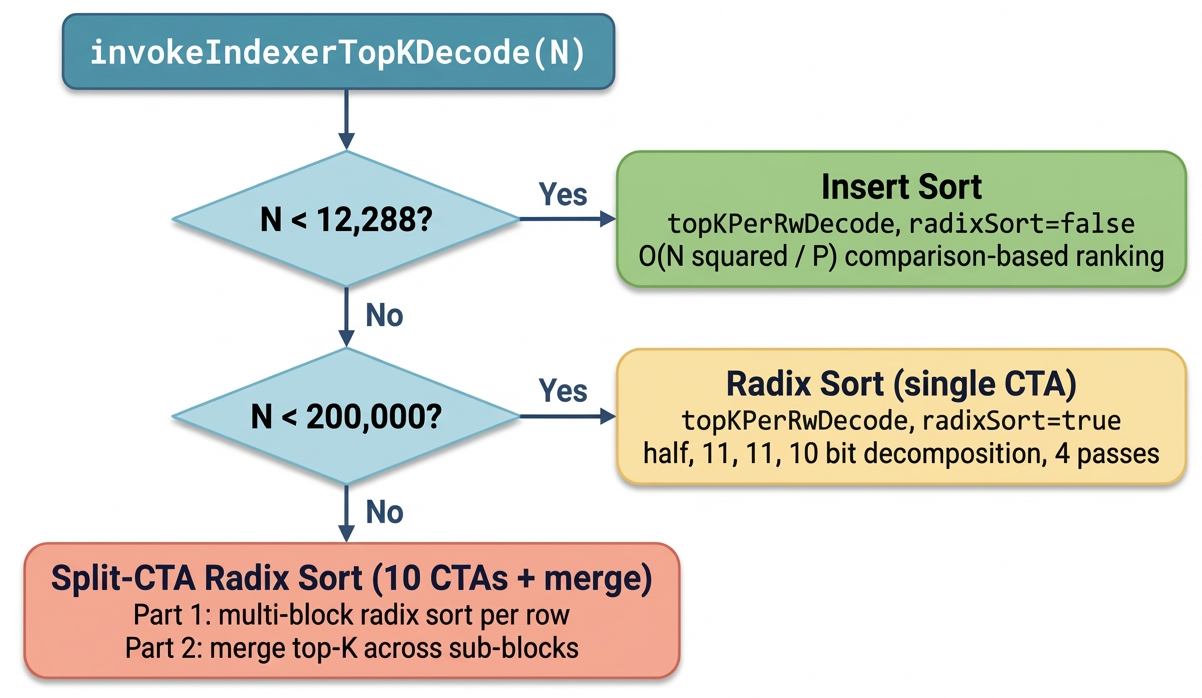

- Top-K selection is a real latency bottleneck in long-context sparse-attention decoding, even with optimized indexer/attention kernels.

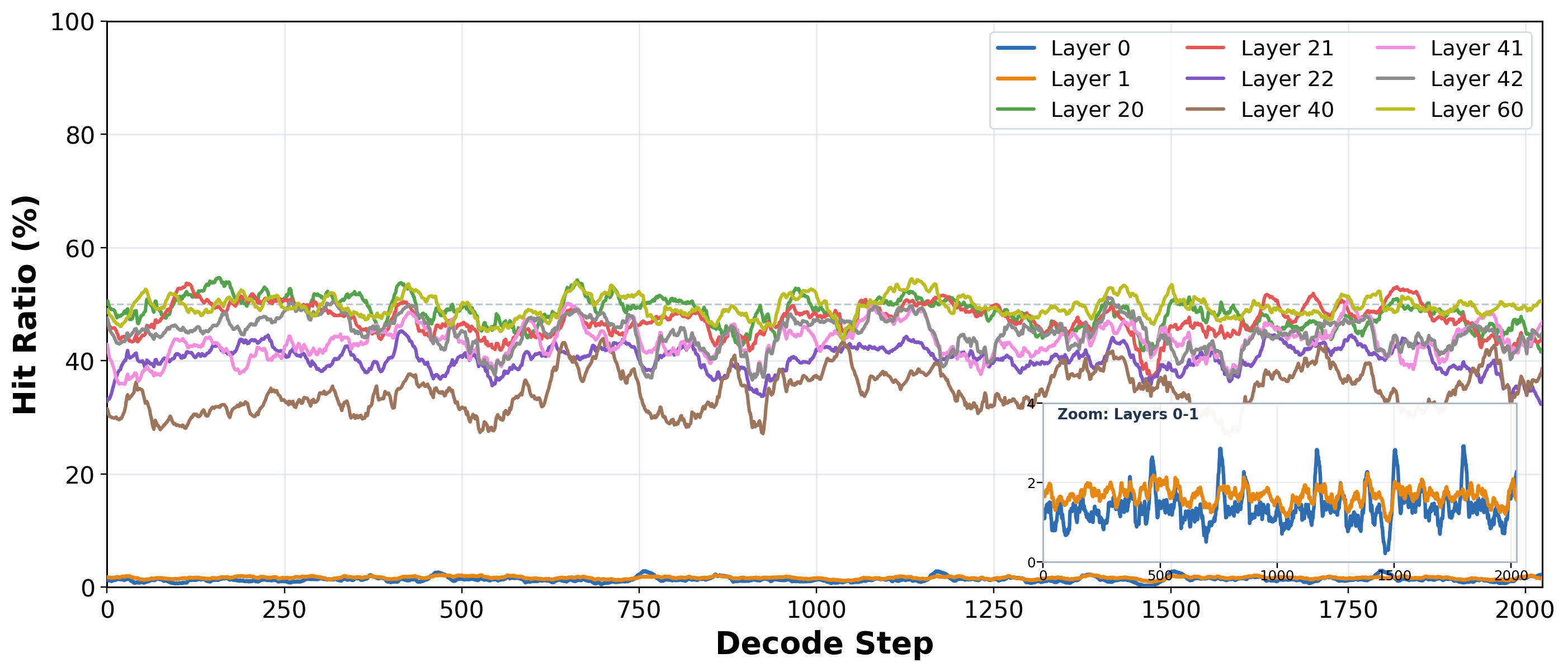

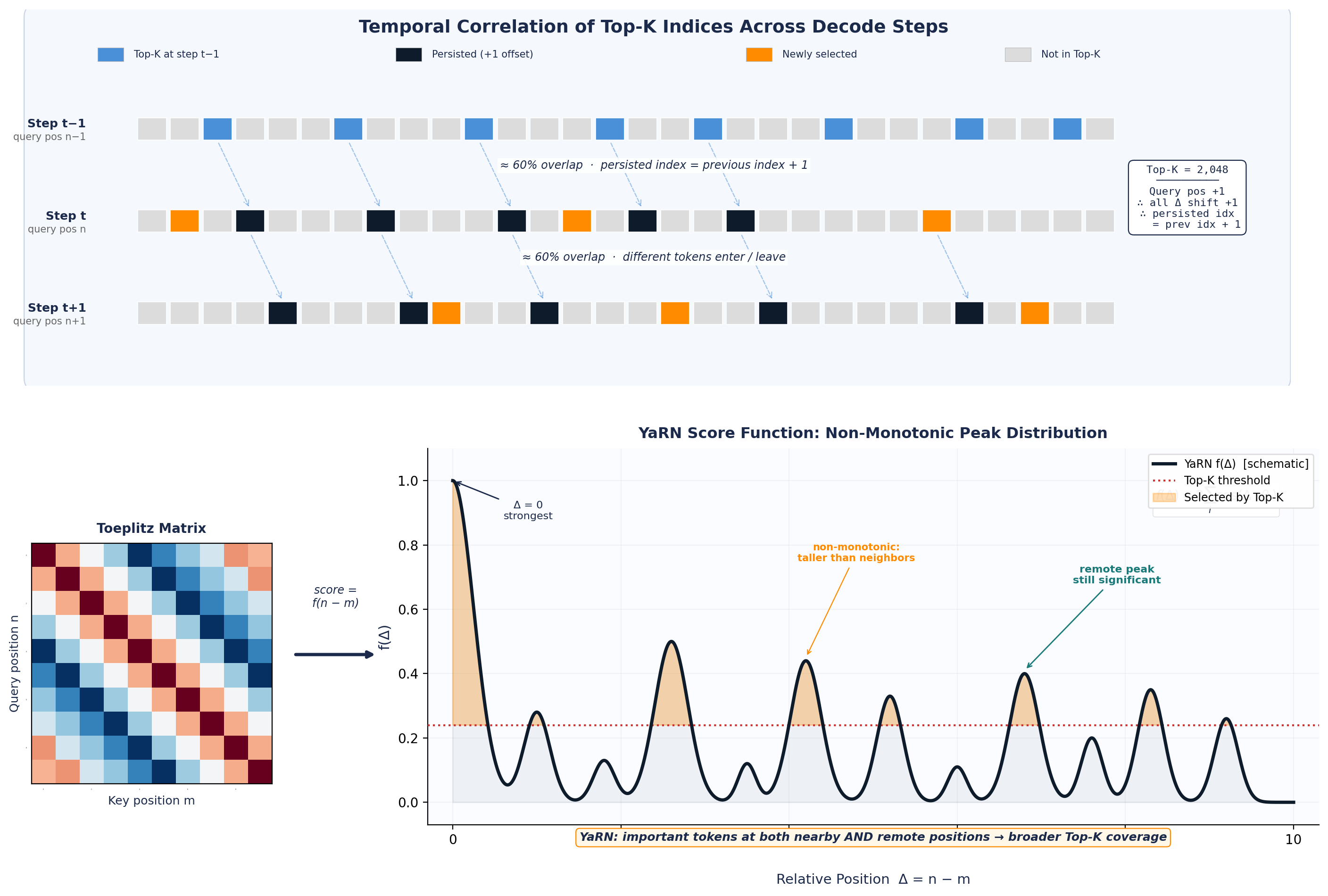

- Consecutive decode steps exhibit strong temporal Top-K correlation tied to the Toeplitz / RoPE structure of DeepSeek Sparse Attention (DSA) indexer scores.

- Previous-step Top-K can serve as a prediction signal to skip most work while retaining exactness.

- Bit-exact parity with production radix-select is preserved.

Approach

GVR runs four stages per query: (1) Guess — use the prior step’s Top-K as prediction; (2) compute pre-indexed statistics and narrow to a valid threshold via secant-style counting in 1–2 global passes; (3) Verify candidates with a ballot-free collector; (4) Refine to exact selection in shared memory. Implemented in TensorRT-LLM’s DSA stack, targeting Blackwell, replacing the dispatch that previously chose insert/radix/multi-CTA split kernels by sequence length.

Experiments

- Workload: real DeepSeek-V3.2 traces integrated into TensorRT-LLM; SWE-bench-derived LongSeqTasks (e.g., swe_bench_64k).

- Baseline: production radix-select Top-K kernel.

- Deployment: controlled TEP8 min-latency configuration at up to 100K context; also tested with speculative decoding.

- Metrics: single-operator speedup, end-to-end TPOT, bit-exact output verification.

Results

- 1.88× average single-operator speedup vs. radix-select, up to 2.42× per layer per step.

- End-to-end TPOT improves up to 7.52% at 100K context.

- Gains grow with longer contexts; smaller but still positive gains under speculative decoding.

- Outputs remain bit-exact.

Why It Matters

Shows that data-aware, temporally-adaptive kernels can cut a real bottleneck in production long-context LLM serving without accuracy loss, offering a template for Blackwell-era sparse-attention infra.

Connections to Prior Work

Builds on DeepSeek Sparse Attention (DSA) and its RoPE/Toeplitz-structured indexer, sparse-attention decoders generally, classical radix/top-K GPU selection, and TensorRT-LLM kernel optimization. The guess-verify pattern echoes speculative decoding’s predict-then-validate ethos applied to selection.

Open Questions

- Does GVR generalize to non-DSA sparse-attention decoders lacking Toeplitz structure?

- Behavior under prefill, batching, or mixed speculative configurations at scale?

- Robustness when temporal overlap collapses (topic shifts, short contexts)?

- Portability beyond Blackwell to Hopper/other accelerators?

Original abstract

Sparse-attention decoders rely on exact Top-K selection to choose the most important key-value entries for each query token. In long-context LLM serving, this Top-K stage runs once per decode query and becomes a meaningful latency bottleneck even when the indexer and attention kernels are already highly optimized. We present \textbf{Guess-Verify-Refine (GVR)}, a data-aware exact Top-K algorithm for sparse-attention decoding on NVIDIA Blackwell. GVR exploits temporal correlation across consecutive decode steps: it uses the previous step’s Top-K as a prediction signal, computes pre-indexed statistics, narrows to a valid threshold by secant-style counting in 1-2 global passes, verifies candidates with a ballot-free collector, and finishes exact selection in shared memory. We connect this behavior to the Toeplitz / RoPE structure of DeepSeek Sparse Attention (DSA) indexer scores and validate the design on real DeepSeek-V3.2 workloads integrated into TensorRT-LLM. GVR achieves an average \textbf{1.88x} single-operator speedup over the production radix-select kernel, with up to \textbf{2.42x} per layer per step, while preserving bit-exact Top-K outputs. In controlled TEP8 min-latency deployment, it improves end-to-end TPOT by up to \textbf{7.52%} at 100K context, with larger gains at longer contexts and smaller but still positive gains under speculative decoding. While implemented and validated in the current TensorRT-LLM DSA stack on Blackwell, the same principle may extend to sparse-attention decoders whose decode-phase Top-K exhibits temporal stability.