2026-04-24 Paper Digest

Automated summaries of 10 arXiv papers on agents, LLMs, and AI infrastructure, with analysis generated by Claude Code.

1. Preference Heads in Large Language Models: A Mechanistic Framework for Interpretable Personalization

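arXiv: 2604.22345 · cs.CL · relevance score 22

The paper proposes the Preference Heads hypothesis: a small number of attention heads in an LLM causally encode user preferences. Building on this, it designs Differential Preference Steering (DPS), a training-free method for interpretable personalization.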

2. Sovereign Agentic Loops: Decoupling AI Reasoning from Execution in Real-World Systems

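arXiv: 2604.22136 · cs.CR · relevance score 21

The paper proposes Sovereign Agentic Loops (SAL), which use a control plane to decouple LLM reasoning from execution on real systems, and rely on policy validation and an evidence chain to keep agent calls safe and auditable.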

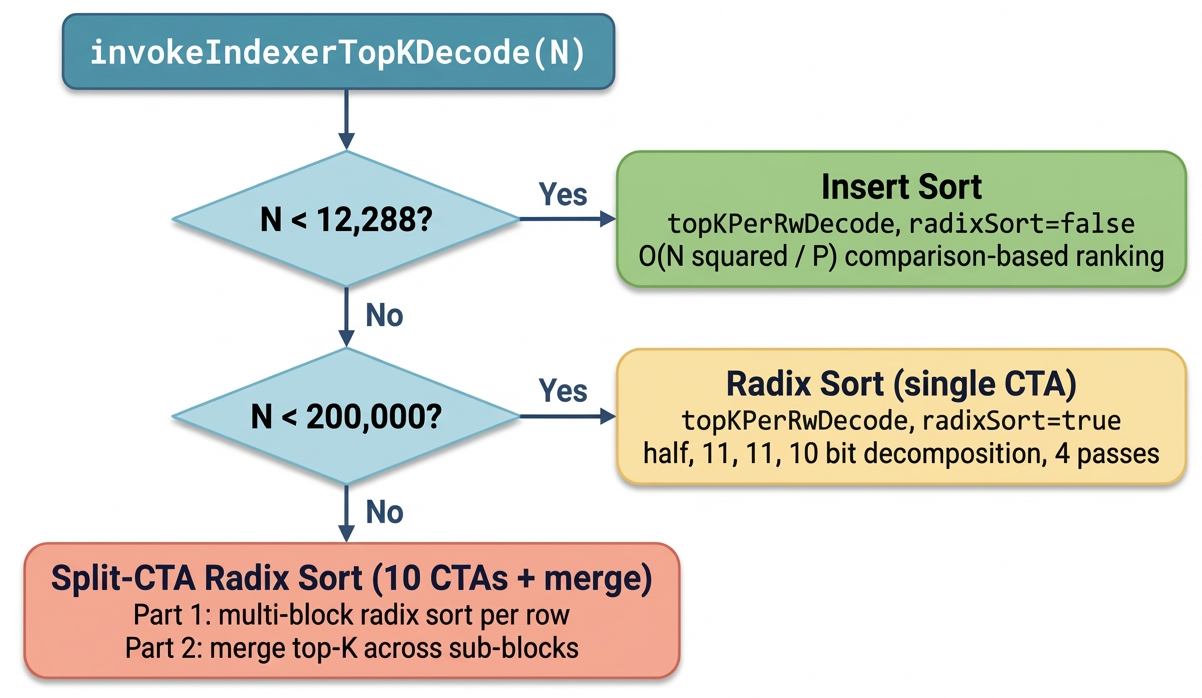
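To make the control-plane idea in the SAL summary concrete, here is a minimal sketch, not the paper's implementation. It assumes the "evidence chain" can be approximated by a hash-chained audit log and the policy by a simple allowlist. The names `ControlPlane`, `ALLOWED_ACTIONS`, `submit`, and `verify_log`, as well as the fake executor, are hypothetical.

```python
import hashlib
import json

# Hypothetical policy: action name -> argument names the agent may supply.
ALLOWED_ACTIONS = {"read_file": {"path"}, "list_dir": {"path"}}


class ControlPlane:
    """Sits between the LLM's proposed actions and the real executors."""

    def __init__(self, executors):
        self.executors = executors   # action name -> callable
        self.log = []                # hash-chained audit records
        self.last_hash = "0" * 64    # genesis value for the chain

    def _record(self, entry):
        # Each record commits to the previous record's hash.
        payload = json.dumps({"prev": self.last_hash, **entry}, sort_keys=True)
        self.last_hash = hashlib.sha256(payload.encode()).hexdigest()
        self.log.append({"payload": payload, "hash": self.last_hash})

    def submit(self, action, args):
        # Policy check happens before anything touches the real system.
        allowed_args = ALLOWED_ACTIONS.get(action)
        ok = (
            allowed_args is not None
            and set(args) <= allowed_args
            and action in self.executors
        )
        self._record({"action": action, "args": args,
                      "decision": "allow" if ok else "deny"})
        return self.executors[action](**args) if ok else None

    def verify_log(self):
        # Recompute the chain; any edited record breaks it.
        prev = "0" * 64
        for rec in self.log:
            if json.loads(rec["payload"])["prev"] != prev:
                return False
            if hashlib.sha256(rec["payload"].encode()).hexdigest() != rec["hash"]:
                return False
            prev = rec["hash"]
        return True


# Fake executor standing in for a real system call.
plane = ControlPlane({"read_file": lambda path: f"<contents of {path}>"})
allowed_result = plane.submit("read_file", {"path": "notes.txt"})
denied_result = plane.submit("delete_file", {"path": "notes.txt"})
```

In this sketch the denied call is still logged, so an auditor sees both what the agent did and what it attempted.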

3. Guess-Verify-Refine: Data-Aware Top-K for Sparse-Attention Decoding on Blackwell via Temporal Correlation

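arXiv: 2604.22312 · cs.DC · relevance score 19

GVR exploits the temporal correlation of Top-K results across adjacent decode steps to run a "guess, verify, refine" procedure. On Blackwell it speeds up the exact Top-K kernel for sparse attention by 1.88× on average and improves end-to-end TPOT by up to 7.52%.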
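The guess, verify, refine idea can be illustrated in a few lines of plain Python. This is only a sketch of the general principle, assuming the previous step's Top-K indices serve as the guess; it is not the paper's GPU kernel, and the function name `guess_verify_refine_topk` is hypothetical.

```python
import heapq


def guess_verify_refine_topk(scores, k, prev_topk):
    """Exact Top-K indices of `scores`, seeded by the previous step's Top-K."""
    n = len(scores)
    if k >= n:
        return sorted(range(n), key=lambda i: scores[i], reverse=True)

    # Guess: reuse the previous step's indices, dropping duplicates and
    # out-of-range entries, then pad with arbitrary indices if needed.
    guess = list(dict.fromkeys(i for i in prev_topk if 0 <= i < n))[:k]
    if len(guess) < k:
        seen = set(guess)
        guess += [i for i in range(n) if i not in seen][: k - len(guess)]
    guess_set = set(guess)

    # Verify: the smallest guessed score is a lower bound on the true
    # k-th largest score, so only entries above it can displace a guess.
    threshold = min(scores[i] for i in guess)
    challengers = [
        i for i in range(n) if i not in guess_set and scores[i] > threshold
    ]

    # Refine: the exact Top-K lies within guess + challengers.
    return heapq.nlargest(k, guess + challengers, key=lambda i: scores[i])


prev = [0, 1, 2]
scores = [0.9, 0.8, 0.1, 0.85, 0.2]
top = guess_verify_refine_topk(scores, 3, prev)  # [0, 3, 1]
```

When the guess is good, the threshold is high and few challengers survive, so the refine step works on a set not much larger than K. When the guess is poor, as in the usage example above, the result is still exact; only the saving shrinks.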

4. GR-Evolve: Design-Adaptive Global Routing via LLM-Driven Algorithm Evolution

arXiv: 2604.22234 · cs.AR · relevance score 19

Modern ASIC design is becoming increasingly complex, driving up design costs while limiting productivity gains from existing EDA tools. Despite decades of progress, current tools rely on fixed heuristics and offer limited control via tool hyperparameters, requiring extensive manual tuning to achieve an acceptable quality of results (QoR).

5. Behavioral Canaries: Auditing Private Retrieved Context Usage in RL Fine-Tuning

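arXiv: 2604.22191 · cs.CR · relevance score 19

The paper proposes Behavioral Canaries: pairs of a document trigger and stylized feedback are planted in the preference data, and a conditional change in style is then used to detect whether RL fine-tuning made unauthorized use of a protected retrieval corpus.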

6. QuantClaw: Precision Where It Matters for OpenClaw

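arXiv: 2604.22577 · cs.AI · relevance score 17

QuantClaw is a plug-and-play "precision routing" plugin for the OpenClaw agent system. It assigns quantization precision dynamically based on task characteristics, saving up to 21.4% in cost and 15.7% in latency relative to a GLM-5 FP8 baseline.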

7. Bridging the Long-Tail Gap: Robust Retrieval-Augmented Relation Completion via Multi-Stage Paraphrase Infusion

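arXiv: 2604.22261 · cs.CL · relevance score 17

RC-RAG uses relation paraphrasing to inject synonymous expressions at three stages (retrieval, summarization, and generation), significantly improving long-tail relation completion without any fine-tuning.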

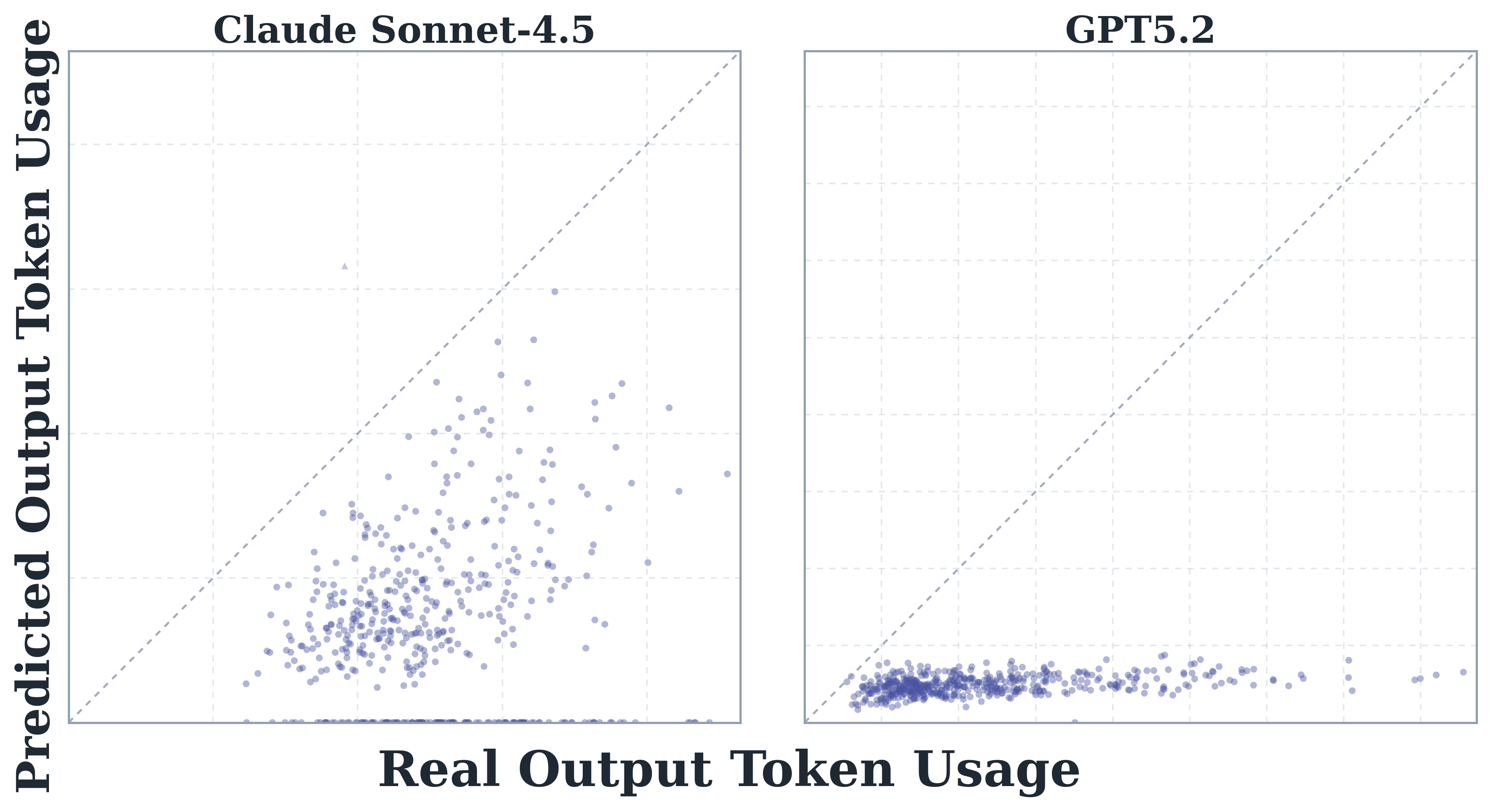

8. How Do AI Agents Spend Your Money? Analyzing and Predicting Token Consumption in Agentic Coding Tasks

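arXiv: 2604.22750 · cs.CL · relevance score 16

The first systematic study of token consumption in agentic coding tasks. It analyzes the trajectories of 8 frontier LLMs on SWE-bench Verified and finds that agentic tasks cost 1000× more than ordinary coding tasks, that runs of the same task differ by as much as 30×, and that models cannot accurately predict their own token cost.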

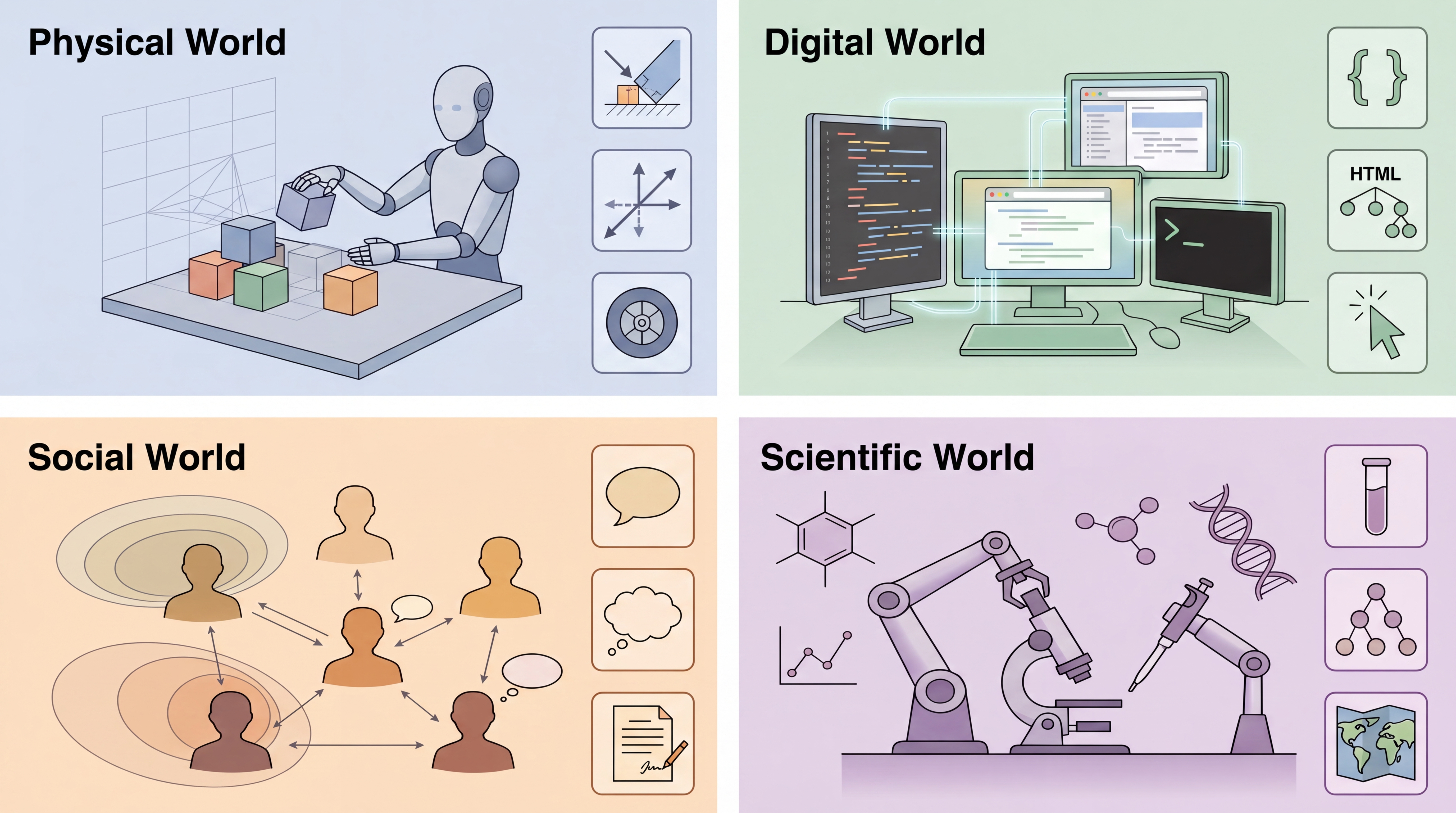

9. Agentic World Modeling: Foundations, Capabilities, Laws, and Beyond

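arXiv: 2604.22748 · cs.AI · relevance score 16

The paper proposes a "levels × laws" taxonomy that groups world models by capability into L1 Predictor, L2 Simulator, and L3 Evolver, and by constraint into physical, digital, social, and scientific. It systematically surveys 400+ related works.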

10. Large Language Models Decide Early and Explain Later

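arXiv: 2604.22266 · cs.CL · relevance score 16

The study finds that during chain-of-thought reasoning LLMs often lock in an answer very early, with later tokens serving mostly as post-hoc explanation. An early stopping strategy built on this finding saves roughly 500 reasoning tokens with only a 2% drop in accuracy.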
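The summary does not say how the paper decides when to stop, so the following is only one hypothetical rule that fits the "decide early" observation: probe the model's interim answer at regular checkpoints and stop once it has stayed the same for `patience` consecutive probes. The function name, the `patience` parameter, and the idea of probing interim answers are all assumptions made for illustration.

```python
def early_stop_index(interim_answers, patience=3):
    """Return the checkpoint index at which to stop reasoning.

    `interim_answers[i]` is the answer probed at checkpoint i, or None if
    no answer could be extracted. If the answer never stays stable for
    `patience` consecutive checkpoints, the last index is returned.
    """
    streak = 0
    for i, answer in enumerate(interim_answers):
        if answer is None:
            streak = 0                      # nothing to compare against
        elif i > 0 and answer == interim_answers[i - 1]:
            streak += 1                     # same answer as the last probe
        else:
            streak = 1                      # a new candidate answer
        if streak >= patience:
            return i
    return len(interim_answers) - 1


probes = ["A", "B", "B", "B", "B", "B"]
stop_at = early_stop_index(probes, patience=3)  # 3
```

A larger `patience` trades fewer saved tokens for a lower risk of stopping on an answer the model would later revise.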
